Quickstart

npm create agentmark does the minimum bootstrap: it writes agentmark.json, creates an empty agentmark/ directory, installs the AgentMark agent skill into your IDE, and hands off to your AI tool. The AI tool then reads your project, asks the docs MCP for the right integration pattern, and wires the SDK into your existing code. There is no template menu and no opinionated scaffolding.

Prerequisites

- Node.js 18+

- An AI-tool-aware editor: Claude Code, Cursor, VS Code (Copilot Chat), or Zed

- An LLM provider API key (OpenAI, Anthropic, etc.) for the model you want to run
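The Node.js requirement can be checked programmatically before bootstrapping. The sketch below is only an illustration: the function name meetsNodeRequirement is hypothetical and not part of AgentMark, and the version string is passed in as a parameter so the logic stays independent of the runtime.

```typescript
// Returns true when a Node.js version string such as "18.17.0" or "v20.11.1"
// satisfies the quickstart's "Node.js 18+" prerequisite.
// Hypothetical helper, not part of AgentMark.
function meetsNodeRequirement(version: string, minMajor = 18): boolean {
  const match = /^v?(\d+)\./.exec(version.trim());
  if (match === null) {
    return false; // unparseable version string: treat as not satisfied
  }
  return Number(match[1]) >= minMajor;
}
```

In a Node script you would typically call it as meetsNodeRequirement(process.versions.node).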

Step 1: Bootstrap

Run npm create agentmark from inside your project directory (or pass a folder name to scaffold a fresh one).

Step 2: Tell your AI tool to integrate

Open your project in Claude Code, Cursor, VS Code, or Zed and send the agent this message:

Set up AgentMark in this project.

The AgentMark skill takes over. It:

- Detects your project’s framework (Next.js, FastAPI, Hono, plain Node, etc.)

- Queries the docs MCP for the right integration recipe

- Proposes a concrete plan back to you — packages to install, where the client file goes, what your first prompt looks like

- After you confirm, installs the SDK, writes the client (agentmark.client.ts/agentmark_client.py), scaffolds a first prompt, and smoke-tests it
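This guide does not show how the skill detects a framework. As a rough illustration of what such detection can look like for JavaScript projects, the sketch below guesses a framework from the dependency names in a package.json. The type, the function name, and the rules are all hypothetical; this is not the skill's actual logic.

```typescript
type Framework = "nextjs" | "hono" | "node";

// Guesses a framework from the dependency names found in a package.json.
// Only JavaScript projects are covered; a Python project (for example
// FastAPI) would need a different signal such as pyproject.toml.
// Hypothetical illustration, not the AgentMark skill's implementation.
function guessFramework(dependencies: Record<string, string>): Framework {
  if ("next" in dependencies) return "nextjs";
  if ("hono" in dependencies) return "hono";
  return "node"; // fall back to plain Node when nothing else matches
}

const guess = guessFramework({ hono: "^4.0.0", zod: "^3.0.0" });
// guess is "hono"
```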

Step 3: Add your provider key

The agent will tell you which env var to set for the model it picked. For OpenAI’s gpt-4o-mini (the common default), that is typically OPENAI_API_KEY, set in your .env file.
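A small pre-flight check can catch a missing key before the first run. The sketch below assumes the variable is OPENAI_API_KEY, the conventional name for OpenAI models; the agent may name a different one for your provider. The helper missingEnvVars is hypothetical, and the environment is passed in as a plain object so the check does not depend on the runtime.

```typescript
// Reports which of the required environment variables are missing or empty.
// `env` is passed in so the function can be checked without touching the real
// environment; in Node you would pass process.env.
// Hypothetical helper, not part of AgentMark.
function missingEnvVars(
  env: Record<string, string | undefined>,
  required: string[],
): string[] {
  return required.filter((name) => {
    const value = env[name];
    return value === undefined || value.trim() === "";
  });
}

// Assumption: OPENAI_API_KEY is the variable the agent asked for.
// "sk-example" is a placeholder, not a real key.
const missing = missingEnvVars({ OPENAI_API_KEY: "sk-example" }, ["OPENAI_API_KEY"]);
// missing is [] because the key is present and non-empty
```

For AgentMark Cloud, the same check could include AGENTMARK_API_KEY and AGENTMARK_APP_ID.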

Step 4: Run your first prompt

- Local

- Cloud

Start the dev server and keep it running in a separate terminal, then run the prompt the agent scaffolded (the agent will tell you the path; chat.prompt.mdx is the conventional default).

The CLI prints the model output, token counts, cost estimate, and a 📊 View trace URL you can open in the browser for the full span tree.

The dev server listens on ports 9418 (API), 9417 (webhook), and 3000 (UI app). Override with --api-port / --webhook-port / --app-port if you need different ports.

Step 5: Run an experiment

An experiment runs a prompt against a dataset and scores each row. Add a test_settings block to your prompt’s frontmatter pointing at a .jsonl dataset (see Datasets for the row shape), then run the experiment.
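A .jsonl dataset holds one JSON value per line. Before running an experiment, it can help to confirm the file is well-formed. The sketch below is not part of AgentMark; it flags the lines that fail to parse. The function name and the sample row fields are hypothetical, and the real row shape is the one described on the Datasets page.

```typescript
// Parses JSONL text (one JSON value per line) and returns the 1-based line
// numbers that fail to parse. Blank lines are skipped.
// Hypothetical helper, not part of AgentMark.
function findInvalidJsonlLines(text: string): number[] {
  const invalid: number[] = [];
  text.split(/\r?\n/).forEach((line, index) => {
    if (line.trim() === "") return; // ignore blank lines
    try {
      JSON.parse(line);
    } catch {
      invalid.push(index + 1);
    }
  });
  return invalid;
}

// Placeholder rows: these field names are not the documented row shape.
const sample = '{"input": {"question": "2+2?"}}\n{"input": oops}\n';
const badLines = findInvalidJsonlLines(sample);
// badLines is [2]: the second row is not valid JSON
```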

Use --threshold to gate CI on prompt regressions.
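This page does not spell out the exact semantics of --threshold. As a generic illustration of threshold gating, the sketch below turns per-row pass/fail results into an exit code that a CI job could act on. The function names are hypothetical, and treating the threshold as a fraction between 0 and 1 is an assumption.

```typescript
// Returns the fraction of rows that passed, or 0 for an empty result set.
function passRate(results: boolean[]): number {
  if (results.length === 0) return 0;
  return results.filter(Boolean).length / results.length;
}

// Maps a pass rate to a process exit code: 0 when the gate passes, 1 otherwise.
// `threshold` is a fraction between 0 and 1 (an assumption about units).
// Hypothetical illustration, not the AgentMark CLI's implementation.
function gateExitCode(results: boolean[], threshold: number): number {
  return passRate(results) >= threshold ? 0 : 1;
}

const exitCode = gateExitCode([true, true, true, false], 0.8);
// pass rate is 0.75, below 0.8, so exitCode is 1
```

A non-zero exit code is what makes a CI step fail, which is the usual mechanism behind regression gating.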

What’s in your project after bootstrap

| File | Source | Purpose |
|---|---|---|
| agentmark.json | CLI | Project config: agentmarkPath, version, models, scores |
| agentmark/.gitkeep | CLI | Empty prompts directory (drop .prompt.mdx files here) |
| .mcp.json (and per-IDE configs) | CLI | MCP wiring: docs MCP, agentmark-mcp (Cloud), agentmark-local (dev) |
| .agents/skills/agentmark/ | CLI (via npx skills add) | Agent skill that knows AgentMark; teaches Claude Code / Cursor / etc. |
| agentmark.client.ts (or agentmark_client.py) | Skill | Configured SDK client, added when you ask the AI tool to integrate |
| Your first .prompt.mdx | Skill | Scaffolded by the AI tool, named for your use case |
| .env | You | Provider API key(s); AGENTMARK_API_KEY / AGENTMARK_APP_ID for Cloud |
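To confirm the bootstrap step completed, you can check for the paths the table attributes to the CLI. The sketch below is an illustration only: the helper name is hypothetical, and the existence check is injected as a function so the logic stays independent of any particular filesystem API.

```typescript
// Paths the table attributes to the CLI bootstrap step.
const BOOTSTRAP_PATHS = [
  "agentmark.json",
  "agentmark/.gitkeep",
  ".mcp.json",
  ".agents/skills/agentmark/",
];

// Returns the bootstrap paths for which `exists` reports false.
// `exists` is injected so the check can run against any backing store;
// in Node you could pass existsSync from "node:fs".
// Hypothetical helper, not part of AgentMark.
function missingBootstrapPaths(exists: (path: string) => boolean): string[] {
  return BOOTSTRAP_PATHS.filter((path) => !exists(path));
}

const present = new Set(["agentmark.json", ".mcp.json"]);
const absent = missingBootstrapPaths((path) => present.has(path));
// absent is ["agentmark/.gitkeep", ".agents/skills/agentmark/"]
```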

Next steps

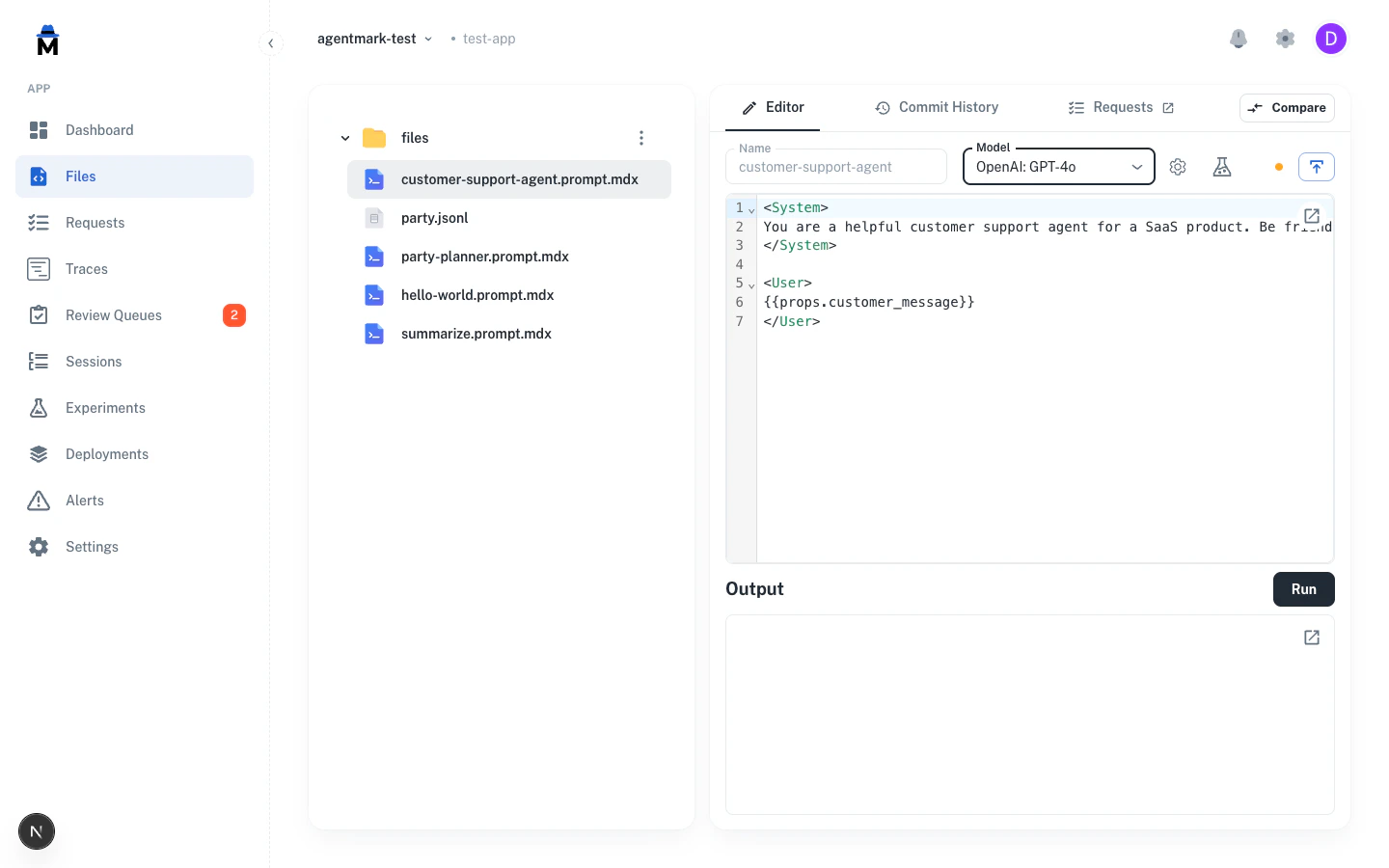

Build Prompts

Author .prompt.mdx files: text, object, image, speech

Example prompts

Copy-paste starters for all four generation types

Evaluate

Test prompts with datasets + evaluators; gate CI on regressions

Observe

Traces, sessions, cost-and-token tracking

Integrations

Vercel AI SDK, Mastra, Claude Agent SDK, Pydantic AI

Deploy

Git-based deploys to AgentMark Cloud

Have Questions?

We’re here to help! Choose the best way to reach us:

- Email us at hello@agentmark.co for support

- Schedule an Enterprise Demo to learn about our business solutions