Tools allow your prompts to call external functions — web searches, calculations, API calls, and more. Agents use tools across multiple LLM calls to solve complex tasks.

Creating tools

Tools are defined using the AI SDK’s tool() function. Each tool includes a description, a Zod schema for its input, and an execute function.

The examples below use the AI SDK v5 signature (inputSchema). AI SDK v4 used parameters instead; do not mix the two. The @agentmark-ai/ai-sdk-v5-adapter package requires v5.

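import { tool } from "ai";
import { z } from "zod";

const calculateTool = tool({
  description: "Performs basic arithmetic calculations",
  inputSchema: z.object({
    expression: z.string().describe("The mathematical expression to evaluate"),
  }),
  execute: async ({ expression }) => {
    // Demo only: Function() evaluates arbitrary JavaScript, so accept nothing
    // but digits, whitespace, and + - * / ( ) . before evaluating.
    // Swap in a real expression parser for production.
    if (!/^[\d\s+\-*\/().]+$/.test(expression)) {
      return { error: "Unsupported characters in expression" };
    }
    const result = Function(`"use strict"; return (${expression})`)();
    return { result };
  },
});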
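The comment in the example recommends swapping in a real expression parser for production. As a rough illustration of what that could look like, here is a minimal recursive-descent evaluator for +, -, *, / and parentheses. The name evaluateArithmetic is hypothetical; it is not part of AgentMark or the AI SDK, and a maintained parsing library may be a better choice in practice.

```typescript
// Hypothetical helper (not an AgentMark or AI SDK API): evaluates
// + - * / and parentheses over decimal numbers without eval or Function.
function evaluateArithmetic(input: string): number {
  let pos = 0;

  function skipSpaces(): void {
    while (pos < input.length && input[pos] === " ") pos++;
  }

  // expression := term (("+" | "-") term)*
  function parseExpression(): number {
    let value = parseTerm();
    for (;;) {
      skipSpaces();
      const op = input[pos];
      if (op !== "+" && op !== "-") return value;
      pos++;
      const rhs = parseTerm();
      value = op === "+" ? value + rhs : value - rhs;
    }
  }

  // term := factor (("*" | "/") factor)*
  function parseTerm(): number {
    let value = parseFactor();
    for (;;) {
      skipSpaces();
      const op = input[pos];
      if (op !== "*" && op !== "/") return value;
      pos++;
      const rhs = parseFactor();
      value = op === "*" ? value * rhs : value / rhs;
    }
  }

  // factor := number | "(" expression ")" | "-" factor
  function parseFactor(): number {
    skipSpaces();
    if (input[pos] === "-") {
      pos++;
      return -parseFactor();
    }
    if (input[pos] === "(") {
      pos++;
      const value = parseExpression();
      skipSpaces();
      if (input[pos] !== ")") throw new Error(`Expected ")" at position ${pos}`);
      pos++;
      return value;
    }
    const match = /^\d+(\.\d+)?/.exec(input.slice(pos));
    if (!match) throw new Error(`Expected a number at position ${pos}`);
    pos += match[0].length;
    return Number(match[0]);
  }

  const result = parseExpression();
  skipSpaces();
  if (pos !== input.length) throw new Error(`Unexpected input at position ${pos}`);
  return result;
}

console.log(evaluateArithmetic("235 * 18 + 42")); // 4272
```

Inside the tool, the execute function could then return { result: evaluateArithmetic(expression) } and let anything outside the grammar fail with a descriptive error instead of being executed.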

Passing tools to the client

Pass tools to createAgentMarkClient via the tools option — a plain object keyed by name:

import { createAgentMarkClient, VercelAIModelRegistry } from "@agentmark-ai/ai-sdk-v5-adapter";
import { openai } from "@ai-sdk/openai";
import { tool } from "ai";
import { z } from "zod";

const modelRegistry = new VercelAIModelRegistry();
modelRegistry.registerProviders({ openai });

const calculateTool = tool({
  description: "Performs basic arithmetic calculations",
  inputSchema: z.object({
    expression: z.string().describe("The mathematical expression to evaluate"),
  }),
  execute: async ({ expression }) => {
    // Demo only: reject anything that is not plain arithmetic before evaluating.
    if (!/^[\d\s+\-*\/().]+$/.test(expression)) {
      return { error: "Unsupported characters in expression" };
    }
    const result = Function(`"use strict"; return (${expression})`)();
    return { result };
  },
});

// The keys of the tools object are the names prompts use to reference each tool.
const agentmark = createAgentMarkClient({
  modelRegistry,
  tools: {
    calculate: calculateTool,
  },
});

Tool configuration in frontmatter

Reference tools by name in your prompt’s frontmatter. The tool names must match the keys in your tools object:

---
name: calculator
text_config:
  model_name: gpt-4
  tools:
    - calculate
---

<System>
You are a math tutor that can perform calculations. Use the calculate tool when you need to compute something.
</System>

<User>What's 235 * 18 plus 42?</User>
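Because frontmatter names must match the keys of the tools object, a small pre-flight check can catch typos before a prompt runs. The sketch below is an assumption-laden illustration: findMissingTools is a hypothetical helper, not an AgentMark API, and how AgentMark itself reports an unmatched name is not covered here.

```typescript
// Hypothetical pre-flight check; not an AgentMark API.
function findMissingTools(
  frontmatterTools: string[],
  registeredTools: Record<string, unknown>,
): string[] {
  return frontmatterTools.filter(
    (toolName) =>
      // Assumption: mcp:// references are resolved against an MCP server,
      // so they are not expected to appear as keys in the tools object.
      !toolName.startsWith("mcp://") &&
      !Object.prototype.hasOwnProperty.call(registeredTools, toolName),
  );
}

// Stand-in for the object passed as `tools` to createAgentMarkClient.
const registeredTools = { calculate: {} };

const missingTools = findMissingTools(["calculate", "summarize"], registeredTools);
console.log(missingTools); // lists "summarize", which has no matching key
```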

MCP tools in frontmatter

Reference Model Context Protocol tools directly using mcp://{server}/{tool}:

---
name: mcp-example
text_config:
  model_name: gpt-4
  tools:
    - mcp://docs/web-search
    - summarize
---

<System>
Use the web-search tool to look up relevant documentation when needed.
Use the summarize tool to condense content into a short summary.
</System>

<User>
Find the page that explains MCP integration and summarize it in 2 sentences.
</User>

- mcp://docs/web-search resolves to the MCP server named docs, tool web-search

- summarize is a tool provided via the tools option in createAgentMarkClient

- Use mcp://docs/* to include every tool exported by a server

See MCP integration for details on configuring MCP servers.
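To make the mcp://{server}/{tool} format concrete, here is a minimal sketch of how such a reference could be split into its parts. parseMcpToolRef and the McpToolRef shape are hypothetical names used for illustration; AgentMark's own resolution logic may differ.

```typescript
// Hypothetical parser for illustration only; not an AgentMark API.
interface McpToolRef {
  server: string;
  tool: string; // "*" stands for every tool the server exports
  isWildcard: boolean;
}

function parseMcpToolRef(ref: string): McpToolRef | null {
  const match = /^mcp:\/\/([^/]+)\/(.+)$/.exec(ref);
  if (!match) return null; // not an MCP reference, e.g. a plain tool name
  const [, server = "", tool = ""] = match;
  return { server, tool, isWildcard: tool === "*" };
}

console.log(parseMcpToolRef("mcp://docs/web-search")); // server "docs", tool "web-search"
console.log(parseMcpToolRef("mcp://docs/*")); // wildcard reference for server "docs"
console.log(parseMcpToolRef("summarize")); // null: a plain tool name
```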

Agents

Enable multi-step agent workflows by setting max_calls, the maximum number of LLM calls the agent may make. The SDK handles the loop automatically, passing tool results back to the model until the task is complete or the limit is reached:

---
name: travel-agent
text_config:
  model_name: gpt-4
  max_calls: 3
  tools:
    - search_flights
    - check_weather
---

<System>
You are a helpful travel assistant that can search flights and check weather conditions.

When helping users plan trips:
1. Search for available flights
2. Check the weather at the destination
3. Make recommendations based on both flight options and weather
</System>

<User>
I want to fly from San Francisco to New York next week. Can you help me plan my trip?
</User>
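Conceptually, a multi-step run is a loop: call the model, execute any tool it requests, feed the result back, and stop at a final answer or when the call budget runs out. The sketch below illustrates that idea with a scripted stand-in for the LLM. Every name in it (runAgentLoop, ModelStep, scriptedModel, fakeTools) is hypothetical, the tool results are made-up placeholders, and this is not how the AI SDK or AgentMark is actually implemented.

```typescript
// Illustrative only: a simplified loop, not the AI SDK's or AgentMark's code.
type ToolCall = { toolName: string; input: unknown };
type ModelStep =
  | { kind: "tool-call"; call: ToolCall }
  | { kind: "text"; text: string };

type Model = (history: string[]) => Promise<ModelStep>;
type ToolSet = Record<string, (input: unknown) => Promise<unknown>>;

async function runAgentLoop(
  model: Model,
  tools: ToolSet,
  userMessage: string,
  maxCalls: number,
): Promise<{ text: string | null; calls: number }> {
  const history: string[] = [`user: ${userMessage}`];
  for (let calls = 1; calls <= maxCalls; calls++) {
    const step = await model(history);
    if (step.kind === "text") return { text: step.text, calls };
    const execute = tools[step.call.toolName];
    const result = execute
      ? await execute(step.call.input)
      : { error: `Unknown tool: ${step.call.toolName}` };
    // Feed the tool result back so the next model call can use it.
    history.push(`tool ${step.call.toolName}: ${JSON.stringify(result)}`);
  }
  return { text: null, calls: maxCalls }; // budget exhausted before a final answer
}

// Scripted stand-in for an LLM: two tool calls, then a final answer.
const scriptedModel: Model = async (history) => {
  const toolResults = history.filter((entry) => entry.startsWith("tool ")).length;
  if (toolResults === 0) {
    return { kind: "tool-call", call: { toolName: "search_flights", input: { from: "SFO", to: "JFK" } } };
  }
  if (toolResults === 1) {
    return { kind: "tool-call", call: { toolName: "check_weather", input: { city: "New York" } } };
  }
  return { kind: "text", text: "Here is a suggested itinerary." };
};

// Placeholder tools returning made-up data.
const fakeTools: ToolSet = {
  search_flights: async () => ({ flights: ["EXAMPLE-1"] }),
  check_weather: async () => ({ forecast: "sunny" }),
};

runAgentLoop(scriptedModel, fakeTools, "Plan my trip", 3).then((outcome) => {
  console.log(outcome); // final text arrives on the third call
});
```

With a budget of 3 the scripted run finishes with a text answer; with a budget of 2 the same script would stop after the second tool call and return no text, which is why the limit should leave room for the final response.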

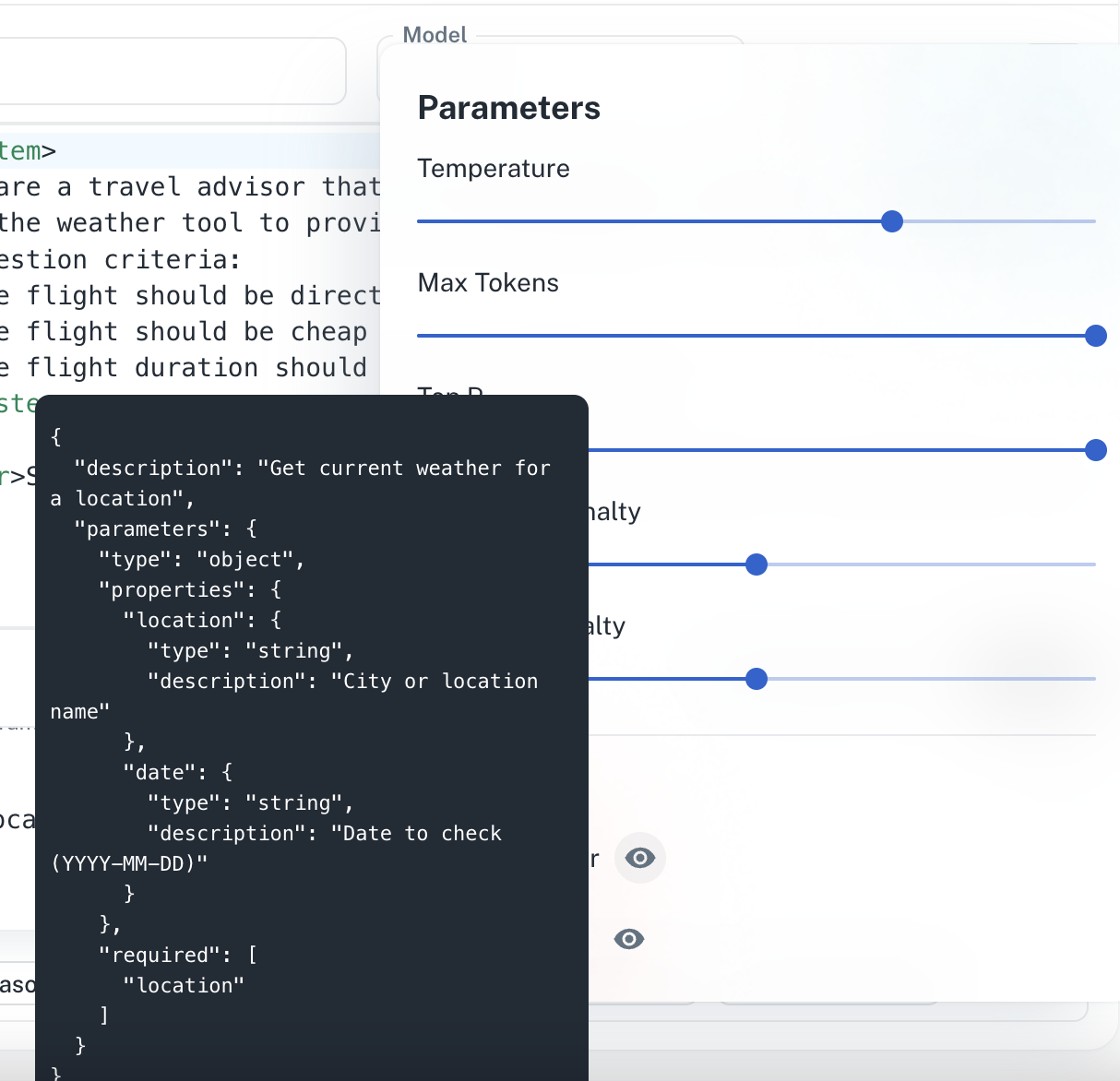

Testing agents in the Dashboard

Run agents directly in the Dashboard to see how they use tools in real time:

The agent panel shows each step the model takes: the tool it called, the arguments it passed, the tool’s response, and the model’s next move. You can inspect the full tool-call trace without leaving the editor.

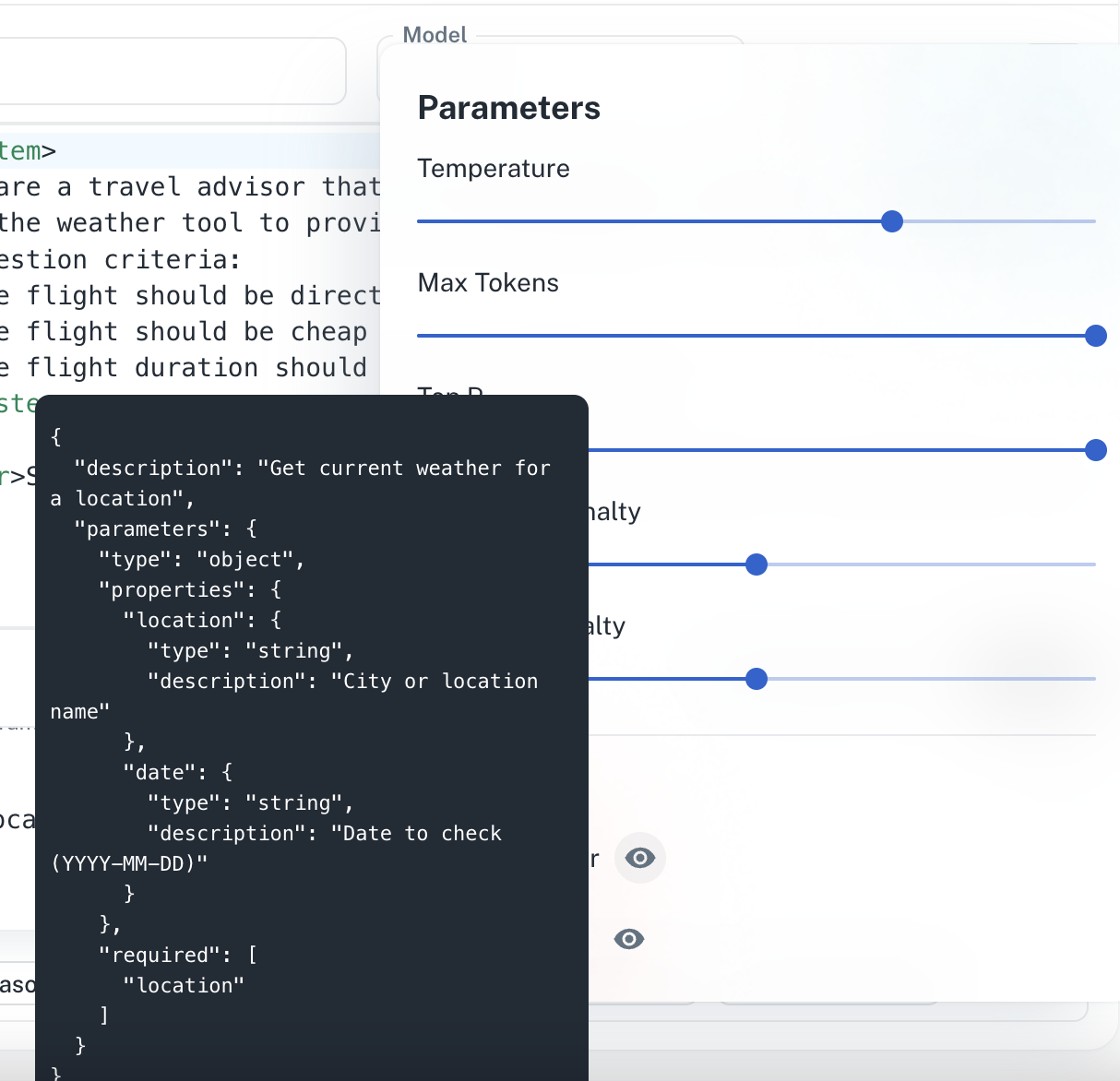

View configured tools and their schemas:

The tool-schema panel lists every tool referenced in your prompt’s frontmatter, with its description and the full Zod-derived JSON schema for its inputs.

Best practices

- Keep tools focused on a single responsibility.

- Provide clear descriptions to help the LLM use tools appropriately.

- Handle errors gracefully and return informative error messages.

- Use descriptive parameter names and include helpful descriptions.
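As one possible way to apply the error-handling advice, a tool's logic can be wrapped so that failures come back as structured data the model can read, rather than as uncaught exceptions. withErrorHandling and ToolResult are hypothetical names for this sketch; they are not part of the AI SDK or AgentMark.

```typescript
// Hypothetical wrapper, not part of the AI SDK or AgentMark.
type ToolResult<T> = { ok: true; data: T } | { ok: false; error: string };

function withErrorHandling<I, O>(
  toolName: string,
  run: (input: I) => Promise<O>,
): (input: I) => Promise<ToolResult<O>> {
  return async (input: I): Promise<ToolResult<O>> => {
    try {
      return { ok: true, data: await run(input) };
    } catch (err) {
      const reason = err instanceof Error ? err.message : String(err);
      // Return the failure as data so the model can read it and recover.
      return { ok: false, error: `${toolName} failed: ${reason}` };
    }
  };
}

// Example: a division helper that reports a clear message instead of throwing.
const divide = withErrorHandling(
  "divide",
  async ({ a, b }: { a: number; b: number }) => {
    if (b === 0) throw new Error("division by zero");
    return a / b;
  },
);

divide({ a: 1, b: 0 }).then((outcome) => {
  console.log(outcome); // ok is false, error is "divide failed: division by zero"
});
```

The wrapped function could be used as a tool's execute function, keeping each tool's own logic focused on its single responsibility.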
