AgentMark uses OpenTelemetry to provide distributed tracing for your prompt executions. This gives you complete visibility into how your prompts perform in production.

Tracing must first be set up in your application by a developer. See Tracing setup for instructions.

Understanding traces

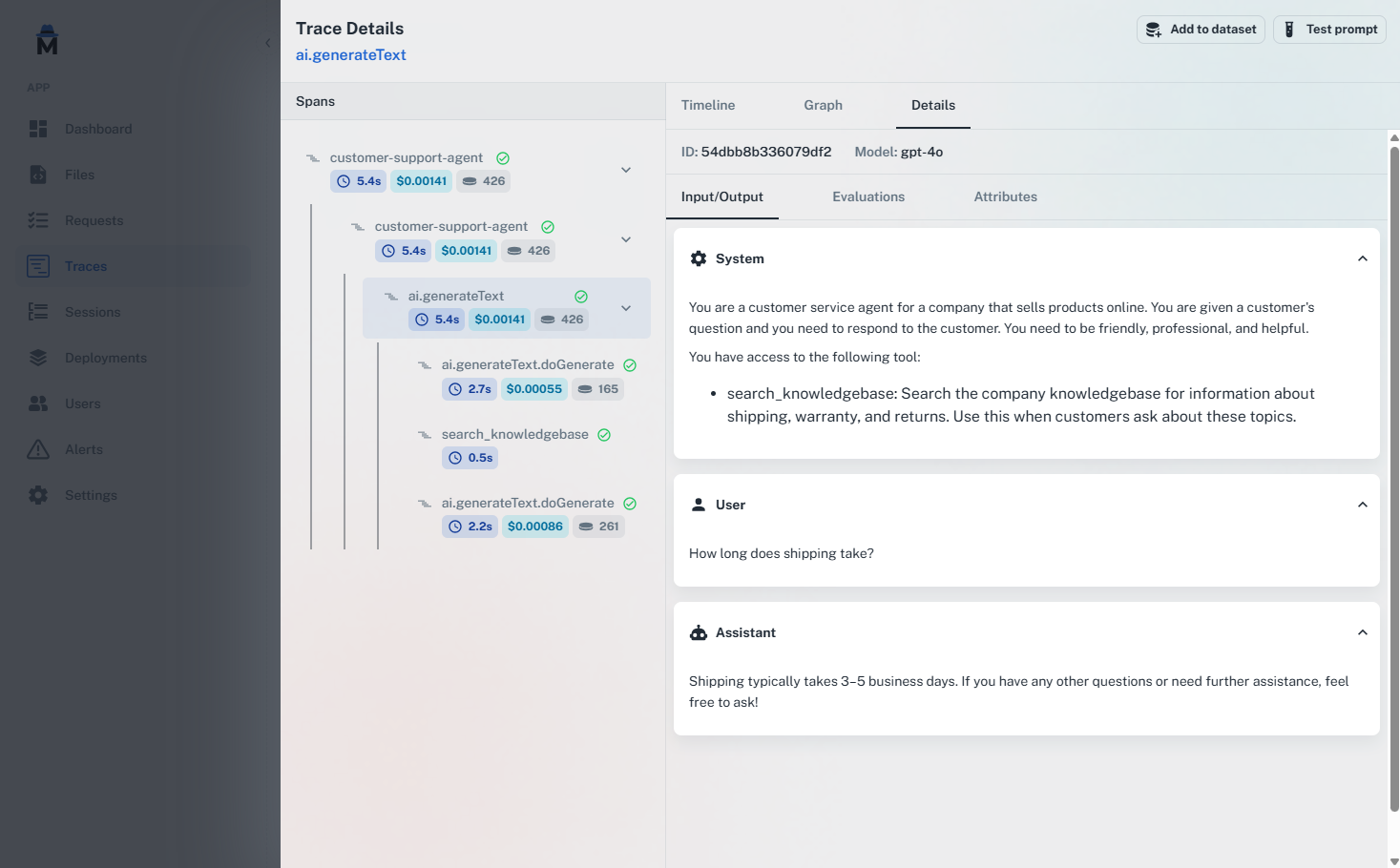

A trace represents the complete execution of a prompt, including all its steps, tool calls, and metadata. Each trace contains:

- Execution timeline: See exactly when each step occurred and how long it took.

- Token usage: Track input tokens, output tokens, and total tokens consumed.

- Costs: Monitor spending on a per-request basis.

- Tool calls: View all tool executions, their parameters, and results.

- Custom metadata: Add context like user IDs, session IDs, and custom attributes.

- Error information: Detailed error messages and stack traces when issues occur.

Collected spans

AgentMark records the following OpenTelemetry spans:

| Span type | Description | Attributes |
|---|---|---|
| ai.inference | Full length of the inference call | operation.name, ai.operationId, ai.prompt, ai.response.text, ai.response.toolCalls, ai.response.finishReason |
| ai.toolCall | Individual tool executions | operation.name, ai.operationId, ai.toolCall.name, ai.toolCall.args, ai.toolCall.result |
| ai.stream | Streaming response data | ai.response.msToFirstChunk, ai.response.msToFinish, ai.response.avgCompletionTokensPerSecond |

Span kinds

Each span carries a semantic kind that categorizes the type of operation it represents. Span kinds affect how spans can be filtered and how analytics are grouped on the dashboard.

| Kind | Description |
|---|---|
| function | Generic computation step (default) |
| llm | A call to a language model |
| tool | An external tool or API call |
| agent | An orchestration loop that decides what to do next |
| retrieval | A vector database query or document search |
| embedding | A call to an embedding model |
| guardrail | A content safety or validation check |

Span kinds are set when instrumenting code with observe(); see SpanKind values for implementation details.
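To make the grouping role of span kinds concrete, here is a minimal sketch that buckets spans by kind and falls back to function when no kind is set, mirroring the default listed in the table above. The span dictionaries and their `kind` and `duration_ms` keys are hypothetical stand-ins for illustration, not AgentMark's actual span schema.

```python
from collections import defaultdict

# The kinds listed in the table above.
SPAN_KINDS = {"function", "llm", "tool", "agent", "retrieval", "embedding", "guardrail"}
DEFAULT_KIND = "function"  # the documented default kind


def total_duration_by_kind(spans):
    """Sum durations (ms) per span kind; spans without a kind count as 'function'."""
    totals = defaultdict(float)
    for span in spans:
        kind = span.get("kind") or DEFAULT_KIND
        if kind not in SPAN_KINDS:
            raise ValueError(f"unknown span kind: {kind!r}")
        totals[kind] += span["duration_ms"]
    return dict(totals)


# Hypothetical example data (names and durations are made up).
example_spans = [
    {"name": "plan", "kind": "agent", "duration_ms": 40.0},
    {"name": "search-docs", "kind": "retrieval", "duration_ms": 120.0},
    {"name": "answer", "kind": "llm", "duration_ms": 900.0},
    {"name": "format-output", "duration_ms": 5.0},  # no kind set: treated as function
    {"name": "summarize", "kind": "llm", "duration_ms": 600.0},
]

print(total_duration_by_kind(example_spans))
```

Rejecting unknown kinds rather than silently bucketing them is a design choice of this sketch; a real pipeline might prefer to fall back to the default instead.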

LLM span attributes

Each LLM span contains attributes that vary slightly depending on the adapter you use (for example, AI SDK (Vercel) or Claude Agent SDK). The table below shows common attributes across integrations:

| Attribute | Description |
|---|---|
| ai.model.id | Model identifier |
| ai.model.provider | Model provider name |
| ai.usage.promptTokens | Number of prompt tokens |
| ai.usage.completionTokens | Number of completion tokens |
| ai.settings.maxRetries | Maximum retry attempts |
| ai.telemetry.functionId | Function identifier |
| ai.telemetry.metadata.* | Custom metadata |
| ai.response.text | Response text |
| ai.response.toolCalls | Tool calls array |
| ai.response.finishReason | Finish reason |

agentmark.metadata.* attributes.
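As an illustration of how the attributes in the table above might be consumed, the following sketch totals prompt and completion tokens per model. It assumes each span has already been loaded as a dictionary with an `attributes` mapping keyed by the attribute names above; that record shape, the function name, and the sample values are hypothetical.

```python
from collections import defaultdict


def token_usage_by_model(spans):
    """Aggregate ai.usage.* token counts per ai.model.id."""
    totals = defaultdict(lambda: {"prompt": 0, "completion": 0, "total": 0})
    for span in spans:
        attrs = span.get("attributes", {})
        model = attrs.get("ai.model.id")
        if model is None:
            continue  # not an LLM span, or no model was recorded
        prompt = int(attrs.get("ai.usage.promptTokens", 0))
        completion = int(attrs.get("ai.usage.completionTokens", 0))
        entry = totals[model]
        entry["prompt"] += prompt
        entry["completion"] += completion
        entry["total"] += prompt + completion
    return dict(totals)


# Hypothetical spans; model names and token counts are made up.
example_spans = [
    {"attributes": {"ai.model.id": "model-a",
                    "ai.usage.promptTokens": 120,
                    "ai.usage.completionTokens": 30}},
    {"attributes": {"ai.model.id": "model-a",
                    "ai.usage.promptTokens": 80,
                    "ai.usage.completionTokens": 20}},
    {"attributes": {"ai.model.id": "model-b",
                    "ai.usage.promptTokens": 10,
                    "ai.usage.completionTokens": 5}},
    {"attributes": {"ai.toolCall.name": "lookup"}},  # tool span: skipped
]

usage = token_usage_by_model(example_spans)
print(usage)
```

Because attribute names vary by adapter, a real aggregation would likely need a per-adapter mapping in place of the hard-coded keys used here.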

Grouping traces

Organize related traces together using custom grouping. This is useful for understanding complex workflows that span multiple prompt executions.

Viewing traces

View traces in your local dev server at http://localhost:3000 or in the AgentMark Dashboard under the Traces tab. Both render the same trace explorer (execution timeline, span tree, graph view, and per-span attribute drill-down). Each trace shows:

- Complete prompt execution timeline

- Tool calls and their durations

- Token usage and costs

- Custom metadata and attributes

- Error information (if any)

- Graph visualization (when graph metadata is present)

- Manual annotations for quality assessment

Filtering and search

AgentMark provides filtering across all trace dimensions: model, status, latency, cost, tokens, metadata, scores, and more. Filters can be combined, saved as views, and shared via URL. Learn more about filtering and search.

Integration

AgentMark works with any application that uses OpenTelemetry. For detailed setup instructions, see Tracing setup.

MCP trace server

For debugging traces directly from your IDE, AgentMark provides an MCP server that exposes list_traces and get_trace tools. This lets you query and inspect traces without leaving your development environment.

Traces and spans API

You can query traces and spans programmatically using the REST API or the CLI. Both the local dev server and the AgentMark Cloud gateway expose /v1/traces, /v1/traces/{traceId}, and /v1/spans, so you can develop against local data and switch to Cloud without changing your integration. Bulk export (/v1/traces/export) is Cloud-only.
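The sketch below illustrates why switching between local and Cloud can be a matter of changing only the base URL: the endpoint paths stay the same. The base URLs are placeholders (the local API may listen on a different port than the dev server UI, and the Cloud address shown is not a real host), authentication is omitted, and the helper names are hypothetical.

```python
import json
from urllib.parse import quote
from urllib.request import urlopen

# Assumption: placeholder base URLs. Substitute the addresses your setup uses.
LOCAL_BASE = "http://localhost:3000"
CLOUD_BASE = "https://gateway.example.invalid"  # hypothetical, not a real host


def traces_url(base: str) -> str:
    """URL for listing traces (GET /v1/traces)."""
    return f"{base.rstrip('/')}/v1/traces"


def trace_url(base: str, trace_id: str) -> str:
    """URL for a single trace (GET /v1/traces/{traceId})."""
    return f"{base.rstrip('/')}/v1/traces/{quote(trace_id, safe='')}"


def spans_url(base: str) -> str:
    """URL for cross-trace span search (GET /v1/spans)."""
    return f"{base.rstrip('/')}/v1/spans"


def get_json(url: str, timeout: float = 10.0):
    """Fetch a URL and decode the JSON body (no auth headers in this sketch)."""
    with urlopen(url, timeout=timeout) as resp:
        return json.load(resp)


# Only the base changes between environments; the paths are identical.
print(traces_url(LOCAL_BASE))
print(traces_url(CLOUD_BASE))
```

Calling `get_json(traces_url(LOCAL_BASE))` would issue the request against a running server; the response format is not described here, so the sketch makes no assumptions about it.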


Cross-trace span search

The GET /v1/spans endpoint lets you search spans across all traces in your project. Unlike the traces API, which returns traces and their nested spans, the spans endpoint queries individual spans directly, regardless of which trace they belong to.

This is useful when you need to:

- Find all LLM calls using a specific model across your entire project

- Identify slow operations by filtering on duration thresholds

- Audit error spans across traces without browsing each trace individually

- Analyze usage patterns for a particular span type (e.g., all GENERATION spans)

| Parameter | Description |
|---|---|
| type | Span type: GENERATION, SPAN, or EVENT |
| status | Span status: UNSET, OK, or ERROR |
| name | Partial match on span name |
| model | Partial match on model name |
| min_duration | Minimum duration in milliseconds |
| max_duration | Maximum duration in milliseconds |
| limit | Results per page (1-500, default 100) |
| offset | Pagination offset |


Each span in the results includes its traceId, so you can drill into the full trace for any span that matches your search.
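A minimal sketch of building a query string for GET /v1/spans from the parameters listed above, with client-side checks that mirror the documented value ranges. The function names are hypothetical, and the pagination helper assumes that a page shorter than `limit` marks the end of the results, which is not confirmed here.

```python
from urllib.parse import urlencode

SPAN_TYPES = {"GENERATION", "SPAN", "EVENT"}
SPAN_STATUSES = {"UNSET", "OK", "ERROR"}


def build_span_query(*, span_type=None, status=None, name=None, model=None,
                     min_duration=None, max_duration=None, limit=100, offset=0):
    """Return a query string for /v1/spans, omitting unset filters."""
    if span_type is not None and span_type not in SPAN_TYPES:
        raise ValueError(f"type must be one of {sorted(SPAN_TYPES)}")
    if status is not None and status not in SPAN_STATUSES:
        raise ValueError(f"status must be one of {sorted(SPAN_STATUSES)}")
    if not 1 <= limit <= 500:
        raise ValueError("limit must be between 1 and 500")
    if offset < 0:
        raise ValueError("offset must be non-negative")
    params = {
        "type": span_type, "status": status, "name": name, "model": model,
        "min_duration": min_duration, "max_duration": max_duration,
        "limit": limit, "offset": offset,
    }
    return urlencode({k: v for k, v in params.items() if v is not None})


def iter_spans(fetch_page, limit=100):
    """Yield spans across pages.

    fetch_page(offset, limit) is a caller-supplied function returning a list
    of spans (hypothetical; plug in your own HTTP call).
    """
    offset = 0
    while True:
        page = fetch_page(offset, limit)
        yield from page
        if len(page) < limit:  # assumption: a short page means no more results
            break
        offset += limit


# Example: GENERATION spans for a model name containing "gpt" that took 2s or more.
print(build_span_query(span_type="GENERATION", model="gpt", min_duration=2000))
```

Validating before sending keeps obviously invalid requests off the wire, though the server remains the authority on what it accepts.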

Best practices

- Use meaningful IDs — Choose descriptive function IDs for easy filtering and debugging.

- Add context — Include relevant metadata like user IDs, session IDs, and business context.

- Monitor regularly — Check traces frequently to catch issues early.

- Set up alerts — Configure alerts for cost, latency, or error thresholds.

- Analyze patterns — Use the Dashboard’s filtering to identify trends and patterns.

Next steps

- Sessions: Group related traces together

- Alerts: Get notified of critical issues

- Annotations: Manually label and score traces

- Tracing setup: Integrate observability in your app
