Cloud feature. Annotations are available in the AgentMark Dashboard.

- Inline annotation — score a single trace directly from the trace drawer

- Annotation queues — batch traces into structured review queues with assignment, progress tracking, and multi-reviewer support

When to use human annotation

| Use case | Example | Workflow |
|---|---|---|
| Quality audits | Review a sample of production traces for correctness and tone | Create a queue, add traces, assign to domain experts |
| Edge case triage | Flag and investigate unexpected model behavior | Inline annotation from the trace drawer |
| Dataset curation | Build high-quality test datasets from real production data | Review in a queue, save passing traces to a dataset |
| Calibrate automated evals | Align your LLM-as-judge scorers with human judgment | Manually score the same traces your evals score, then compare results |
| Multi-reviewer consensus | Get independent assessments from multiple team members | Set reviewers required above 1 on a queue |
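To illustrate the "Calibrate automated evals" workflow, the TypeScript sketch below compares human Pass/Fail annotations with an automated boolean eval score on the same traces. It reports the agreement rate and lists the disagreements in each direction. It is a minimal example under stated assumptions. The `ScorePair` record shape is hypothetical, and this page does not specify how you export both sets of scores from AgentMark.

```typescript
// Minimal calibration check: compare human Pass/Fail annotations with an
// automated boolean eval score on the same traces. The ScorePair shape is
// hypothetical; export the two sets of scores however suits your setup.

interface ScorePair {
  traceId: string;
  human: 0 | 1;     // human annotation (1 = pass, 0 = fail)
  automated: 0 | 1; // automated eval score for the same trace
}

interface Calibration {
  agreement: number;     // fraction of traces where both scores match
  falsePasses: string[]; // eval passed, human failed
  falseFails: string[];  // eval failed, human passed
}

function calibrate(pairs: ScorePair[]): Calibration {
  const falsePasses: string[] = [];
  const falseFails: string[] = [];
  let matches = 0;
  for (const p of pairs) {
    if (p.human === p.automated) {
      matches++;
    } else if (p.automated === 1) {
      falsePasses.push(p.traceId);
    } else {
      falseFails.push(p.traceId);
    }
  }
  // NaN signals "no data" rather than a misleading 0% or 100%.
  const agreement = pairs.length === 0 ? NaN : matches / pairs.length;
  return { agreement, falsePasses, falseFails };
}

const result = calibrate([
  { traceId: "t1", human: 1, automated: 1 },
  { traceId: "t2", human: 0, automated: 1 },
  { traceId: "t3", human: 1, automated: 0 },
  { traceId: "t4", human: 0, automated: 0 },
]);
console.log(result.agreement);   // 0.5
console.log(result.falsePasses); // [ 't2' ]
```

A low agreement rate, or a long list of false passes, suggests the LLM-as-judge scorer may need adjusting before you rely on it at scale.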

Score types

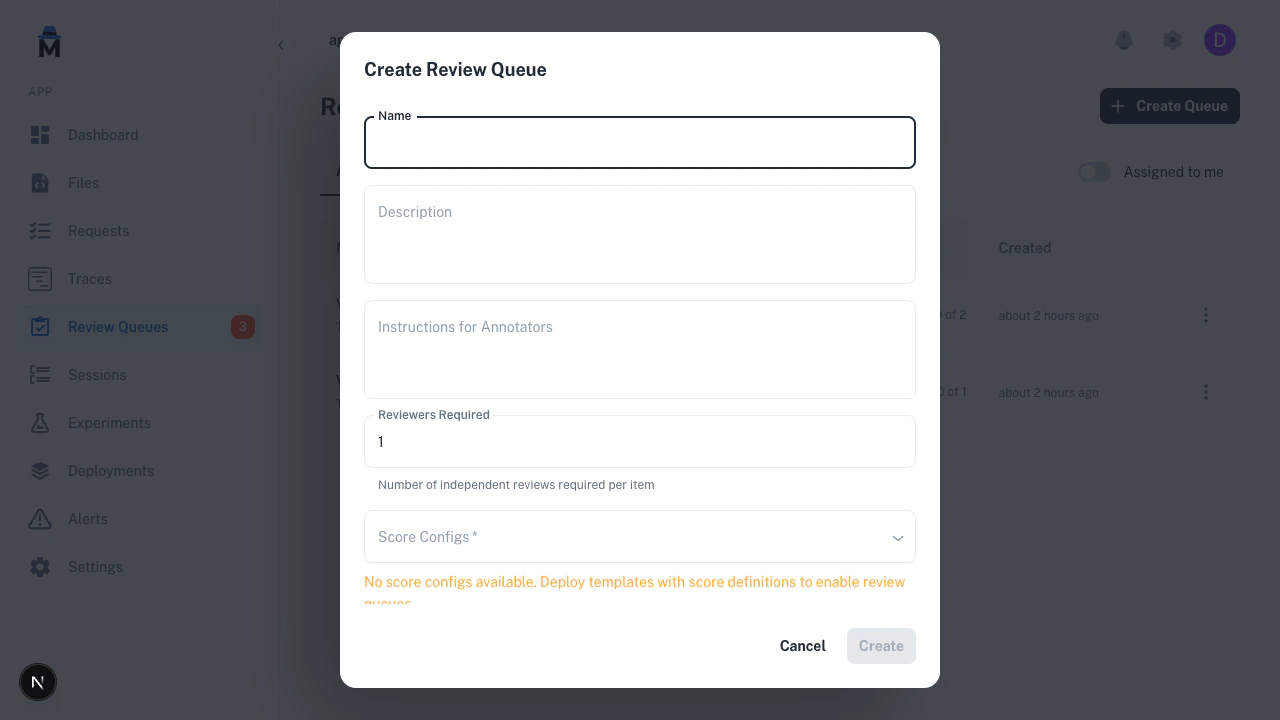

Score configs define what reviewers score on. They are declared in your `agentmark.json` file under the `scores` field and persisted to the platform database when you run `agentmark deploy`. When creating a queue, you select which score configs to include.

Score configs must be deployed before you can create a queue. Run `agentmark deploy` after adding scores to your `agentmark.json`. Once deployed, score configs are always available in the dashboard, with no worker dependency. See Project configuration for the `scores` schema and Evaluations for adding automated eval functions.

- Boolean (pass/fail)

- Numeric (scale)

- Categorical (labels)
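The TypeScript sketch below gives a rough illustration of how the three score types differ. It models them as a discriminated union and converts a reviewer's input into a stored value. Only the boolean mapping (Pass saved as 1, Fail as 0) comes from this page. The `min`, `max`, and `labels` fields and the numeric and categorical validation rules are hypothetical and are not the `agentmark.json` schema. See Project configuration for the real schema.

```typescript
// Sketch of the three score types as a discriminated union.
// Field names (min, max, labels) are hypothetical, not the agentmark.json schema.
type ScoreConfig =
  | { name: string; type: "boolean" }
  | { name: string; type: "numeric"; min: number; max: number }
  | { name: string; type: "categorical"; labels: string[] };

type ReviewerInput = boolean | number | string;

// Convert what the reviewer entered into the stored value.
// Boolean: Pass -> 1, Fail -> 0 (as documented).
// Numeric range checks and storing the categorical label are assumptions.
function toStoredScore(config: ScoreConfig, input: ReviewerInput): number | string {
  switch (config.type) {
    case "boolean":
      if (typeof input !== "boolean") throw new Error(`${config.name}: expected pass/fail`);
      return input ? 1 : 0;
    case "numeric":
      if (typeof input !== "number" || input < config.min || input > config.max) {
        throw new Error(`${config.name}: expected a number in [${config.min}, ${config.max}]`);
      }
      return input;
    case "categorical":
      if (typeof input !== "string" || !config.labels.includes(input)) {
        throw new Error(`${config.name}: expected one of ${config.labels.join(", ")}`);
      }
      return input;
  }
}

// Hypothetical score config names used only for this example.
const correctness: ScoreConfig = { name: "correctness", type: "boolean" };
const helpfulness: ScoreConfig = { name: "helpfulness", type: "numeric", min: 1, max: 5 };

console.log(toStoredScore(correctness, true));  // 1
console.log(toStoredScore(correctness, false)); // 0
console.log(toStoredScore(helpfulness, 4));     // 4
```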

A binary judgment. The reviewer clicks Pass or Fail.Saved as score

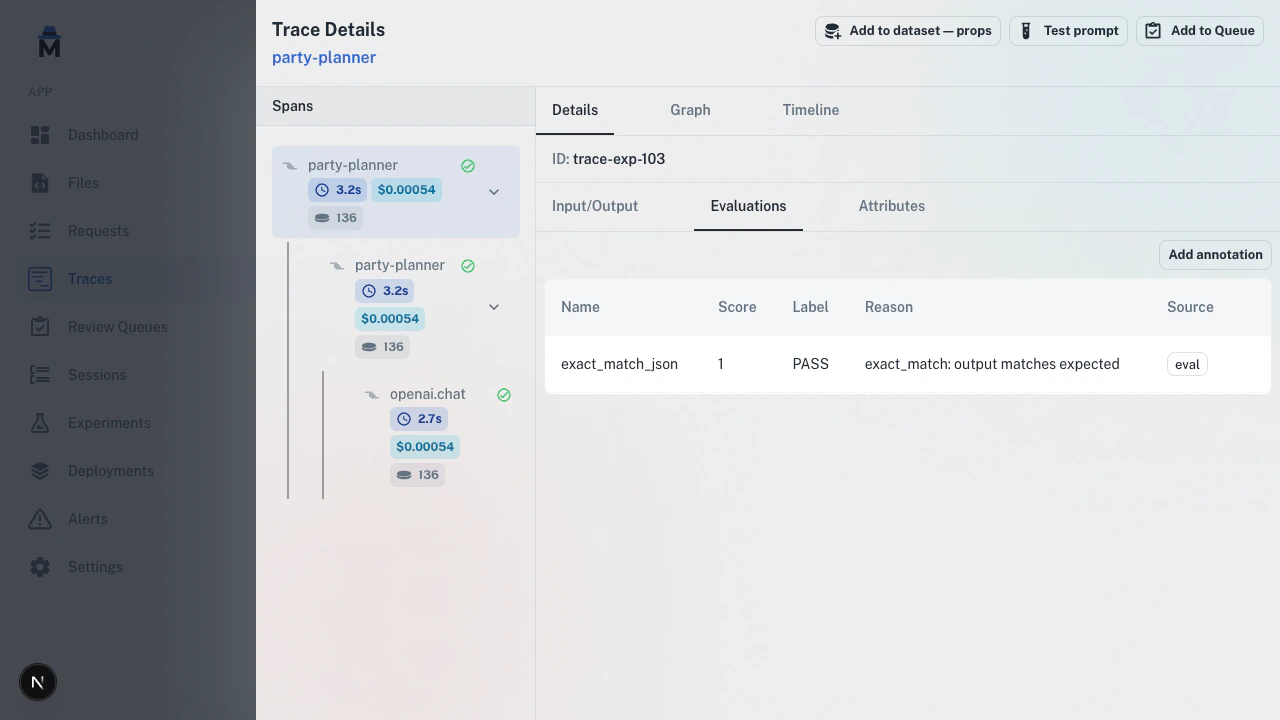

1 (pass) or 0 (fail). Best for clear-cut criteria: “Is the response factually correct?”Inline annotation

Add a score to any trace directly from the trace drawer — no queue required.

Inline annotations appear alongside automated eval scores, distinguished by an “annotation” badge.

Annotation queues

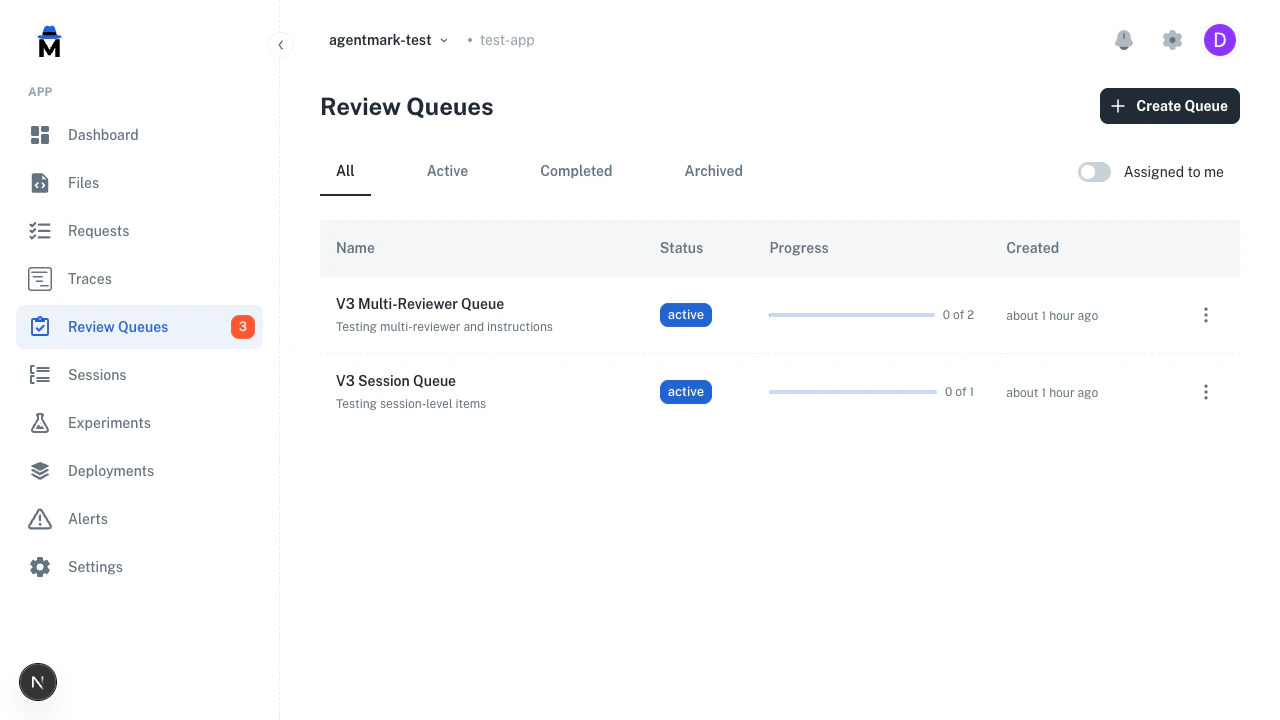

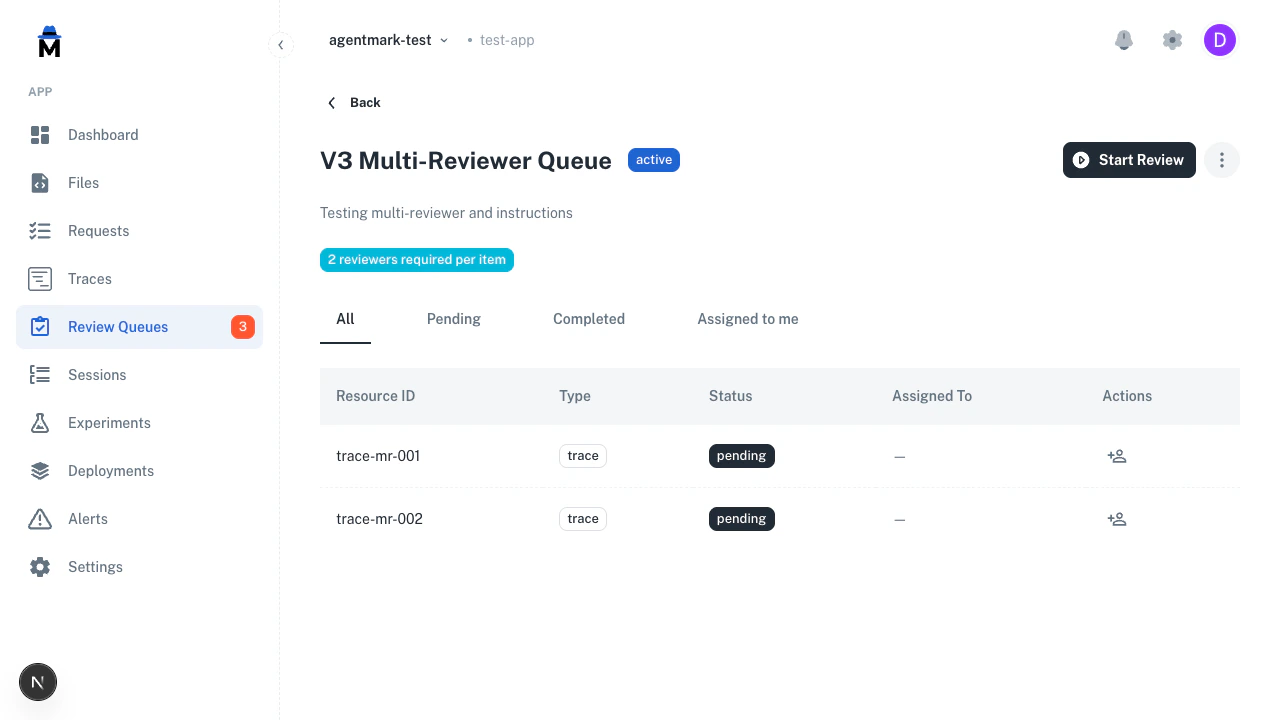

For batch review, use annotation queues. Queues let you organize items, assign reviewers, track progress, and require multiple independent reviews.

Create a queue

Navigate to Review Queues in the sidebar and click Create Queue.

| Field | Required | Description |
|---|---|---|
| Name | Yes | Descriptive name for the review batch |
| Description | No | Context for what this queue covers |
| Instructions for annotators | No | Guidance shown during review (e.g., “Mark PASS if factually correct and professional”) |
| Reviewers required | Yes | Independent reviews needed per item (default: 1) |
| Score configs | Yes | Which scoring dimensions to show during review |
| Default dataset | No | Pre-selects a dataset for the “Save to dataset” action |
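The form's required and optional fields can be summarized as a typed settings object. This is a hypothetical TypeScript sketch. The property names are illustrative, not AgentMark API fields. The checks restate the table above, plus the rule that score configs must be deployed before a queue can use them.

```typescript
// Hypothetical settings object mirroring the Create Queue form.
// Property names are illustrative; they are not AgentMark API field names.
interface QueueSettingsInput {
  name: string;                   // required
  description?: string;           // optional context
  annotatorInstructions?: string; // optional guidance shown during review
  reviewersRequired?: number;     // defaults to 1 when omitted
  scoreConfigs: string[];         // required: names of score configs to show
  defaultDataset?: string;        // optional: pre-selected for "Save to dataset"
}

type QueueSettings = QueueSettingsInput & { reviewersRequired: number };

// Apply the documented default and enforce the documented requirements,
// including that selected score configs must already be deployed.
function normalizeQueueSettings(
  input: QueueSettingsInput,
  deployedScoreConfigs: string[],
): QueueSettings {
  const reviewersRequired = input.reviewersRequired ?? 1;
  if (input.name.trim() === "") {
    throw new Error("Name is required");
  }
  if (!Number.isInteger(reviewersRequired) || reviewersRequired < 1) {
    throw new Error("Reviewers required must be a positive integer");
  }
  if (input.scoreConfigs.length === 0) {
    throw new Error("Select at least one score config");
  }
  const missing = input.scoreConfigs.filter((s) => !deployedScoreConfigs.includes(s));
  if (missing.length > 0) {
    throw new Error(`Not deployed yet (run agentmark deploy): ${missing.join(", ")}`);
  }
  return { ...input, reviewersRequired };
}

const settings = normalizeQueueSettings(
  {
    name: "Weekly quality audit",
    annotatorInstructions: "Mark PASS if factually correct and professional",
    scoreConfigs: ["correctness"],
  },
  ["correctness", "tone"], // hypothetical deployed score config names
);
console.log(settings.reviewersRequired); // 1
```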

Add items

There are four ways to add items to a queue:

- Bulk from traces

- Individual spans

- From experiments

- Via API

Queue detail

Click any queue to see its items, progress, and reviewer assignments.

| Tab | Shows |
|---|---|
| All | Every item in the queue |
| Pending | Items waiting for review |
| Completed | Reviewed or skipped items |
| Assigned to me | Items assigned to you |

Review workflow

Click Start Review to begin. The review view splits into two panels.

Left panel (trace content):

- Metadata bar with trace name, latency, cost, tokens, and model

- Root span input/output formatted as JSON

- Expandable spans tree; click any span to see its I/O

- For session items, a conversation timeline showing all turns

Right panel (annotation):

- Annotator instructions (collapsible, from the queue config)

- Score controls for each configured dimension

- Prior annotations on this resource (read-only)

- Save to dataset section with auto-extracted I/O

| Action | Shortcut | What it does |
|---|---|---|
| Complete + Next | Enter | Save scores, mark complete, advance |
| Skip | None | Mark as skipped, advance |
| Back | None | Return to queue detail |

Multi-reviewer

When reviewers required is set above 1, each reviewer annotates independently:

- The review header shows a progress badge (e.g., “0/2 reviewed”) tracking how many reviewers have completed their assessment

- Each reviewer sees their own fresh annotation form and does not see other reviewers’ scores while annotating

- An item is marked complete only when the required number of independent reviews is reached

- The `/next` endpoint automatically skips items the current reviewer has already reviewed, so each reviewer only sees items they haven’t scored yet
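The multi-reviewer rules above can be modeled with a small in-memory TypeScript sketch. It is a toy illustration of the described behavior, not AgentMark's server implementation. `QueueItem`, `nextItemFor`, and the other names are hypothetical.

```typescript
// Toy in-memory model of the multi-reviewer rules.
// Illustrates the described behavior only; not AgentMark's server logic.

interface QueueItem {
  id: string;
  reviewersRequired: number;
  reviewedBy: Set<string>; // IDs of reviewers who completed this item
}

// Text for the progress badge, e.g. "1/2 reviewed".
function progressBadge(item: QueueItem): string {
  return `${item.reviewedBy.size}/${item.reviewersRequired} reviewed`;
}

// An item is complete only once enough independent reviews exist.
function isComplete(item: QueueItem): boolean {
  return item.reviewedBy.size >= item.reviewersRequired;
}

// Mirrors the described /next behavior: return the first incomplete item
// that this reviewer has not already reviewed.
function nextItemFor(reviewer: string, items: QueueItem[]): QueueItem | undefined {
  return items.find((item) => !isComplete(item) && !item.reviewedBy.has(reviewer));
}

// Record a completed review; the Set ignores a repeat review by the same person.
function recordReview(item: QueueItem, reviewer: string): void {
  item.reviewedBy.add(reviewer);
}

const a: QueueItem = { id: "a", reviewersRequired: 2, reviewedBy: new Set<string>() };
const b: QueueItem = { id: "b", reviewersRequired: 2, reviewedBy: new Set<string>() };
const items = [a, b];

recordReview(a, "alice");
console.log(progressBadge(a));                // 1/2 reviewed
console.log(nextItemFor("alice", items)?.id); // b (alice already reviewed a)
console.log(nextItemFor("bob", items)?.id);   // a (still needs a second review)
```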

Resource types

Queues support three item types:

| Type | When to use | What the reviewer sees |
|---|---|---|
| Trace | Review a complete request | Full trace with expandable per-span I/O |
| Span | Review a single LLM call or tool invocation | Individual span content |
| Session | Review a multi-turn conversation | Conversation timeline across traces |

Programmatic queue management

All queue operations are available via the REST API. Use them to integrate annotation into your CI pipelines or automated workflows. The API supports:

- Create a queue

- Add items to a queue

- Get next item for review

- Update item status or assignment
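For orientation only, here is a hypothetical TypeScript client covering the four queue operations. The base URL, paths, payload fields, and Bearer auth header are all placeholders, not the documented AgentMark API. Only the `/next` path segment appears on this page, so substitute the real endpoints from the API reference. The HTTP function is injected, so you can pass the global `fetch` (Node 18+ or a browser) or a test double.

```typescript
// Hypothetical REST client sketch. Base URL, paths, payload fields, and the
// Bearer auth header are placeholders, NOT the documented AgentMark API.

type ItemType = "trace" | "span" | "session";

interface HttpResponse {
  ok: boolean;
  status: number;
  json(): Promise<unknown>;
}

// The subset of the standard fetch() signature this client relies on,
// so either the global fetch or a test double can be injected.
type HttpFetch = (
  url: string,
  init: { method: string; headers: Record<string, string>; body?: string },
) => Promise<HttpResponse>;

function createQueueClient(baseUrl: string, apiKey: string, http: HttpFetch) {
  // Single place that builds requests and turns HTTP errors into exceptions.
  async function call(method: string, path: string, body?: unknown): Promise<unknown> {
    const res = await http(`${baseUrl}${path}`, {
      method,
      headers: {
        Authorization: `Bearer ${apiKey}`, // placeholder auth scheme
        "Content-Type": "application/json",
      },
      body: body === undefined ? undefined : JSON.stringify(body),
    });
    if (!res.ok) {
      throw new Error(`${method} ${path} failed with HTTP ${res.status}`);
    }
    return res.json();
  }

  const enc = encodeURIComponent; // escape IDs used in path segments

  return {
    createQueue: (name: string, scoreConfigs: string[], reviewersRequired = 1) =>
      call("POST", "/queues", { name, scoreConfigs, reviewersRequired }),
    addItems: (queueId: string, items: { type: ItemType; id: string }[]) =>
      call("POST", `/queues/${enc(queueId)}/items`, { items }),
    nextItem: (queueId: string) => call("GET", `/queues/${enc(queueId)}/next`),
    updateItem: (
      queueId: string,
      itemId: string,
      patch: { status?: string; assignee?: string },
    ) => call("PATCH", `/queues/${enc(queueId)}/items/${enc(itemId)}`, patch),
  };
}
```

Every request goes through one `call` helper. Swapping in the real paths or auth scheme should therefore be a single-place change.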

End-to-end example: dataset curation

A common workflow is using annotation queues to curate high-quality datasets from production traces.

Create a queue

Create a queue with a boolean score config (e.g., `dataset_quality`) and set the default dataset to your target dataset.

Add production traces

Go to Traces, filter to interesting traces (errors, low automated scores, specific prompts), select them, and add them to the queue.

Review and score

Click Start Review. For each trace, read the I/O, mark Pass or Fail, and optionally edit the input/output before saving to the dataset.

Save to dataset

Expand the Save to dataset section, verify the auto-extracted fields, and click Save. The default dataset is pre-selected.
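The outcome of this loop can be sketched in TypeScript. The sketch keeps only traces marked Pass on `dataset_quality` and prefers any reviewer edits over the auto-extracted I/O. It then writes one JSON object per line. The record shapes and the `expected_output` field name are assumptions for illustration. In practice, the dashboard performs the save for you.

```typescript
// Sketch of the curation result. Record shapes and the `expected_output`
// field name are assumptions, not a documented AgentMark format.

interface ReviewedTrace {
  traceId: string;
  input: unknown;                 // auto-extracted root span input
  output: unknown;                // auto-extracted root span output
  scores: Record<string, number>; // e.g. { dataset_quality: 1 }
  editedInput?: unknown;          // set only if the reviewer edited the input
  editedOutput?: unknown;         // set only if the reviewer edited the output
}

interface DatasetRow {
  input: unknown;
  expected_output: unknown;
}

function toDatasetRows(traces: ReviewedTrace[], scoreName = "dataset_quality"): DatasetRow[] {
  return traces
    .filter((t) => t.scores[scoreName] === 1) // boolean Pass is stored as 1
    .map((t) => ({
      input: t.editedInput ?? t.input,
      expected_output: t.editedOutput ?? t.output,
    }));
}

// Serialize one JSON object per line (JSONL).
function toJsonl(rows: DatasetRow[]): string {
  return rows.map((row) => JSON.stringify(row)).join("\n");
}

const curated = toDatasetRows([
  { traceId: "t1", input: { question: "2+2?" }, output: "4", scores: { dataset_quality: 1 } },
  { traceId: "t2", input: { question: "Capital of France?" }, output: "Lyon", scores: { dataset_quality: 0 } },
  { traceId: "t3", input: { question: "3*3?" }, output: "six", scores: { dataset_quality: 1 }, editedOutput: "9" },
]);
console.log(toJsonl(curated));
```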

Human annotation vs automated evals

Use both together; they serve different purposes.

| | Human annotation | Automated evals |
|---|---|---|
| Created by | Team members in the dashboard | Eval functions during experiments |
| Best for | Subjective quality, edge cases, nuance | Regression testing, scale, consistency |
| Scale | Tens to hundreds of items | Entire datasets |
| When | Anytime, on any trace | During experiment runs |

Related

- Evaluations: automate scoring with eval functions

- Datasets: create and manage test datasets

- Experiments: run prompts against datasets to validate quality

- Traces: view and explore trace data

Have Questions?

We’re here to help! Choose the best way to reach us:

- Email us at hello@agentmark.co for support

- Schedule an Enterprise Demo to learn about our business solutions