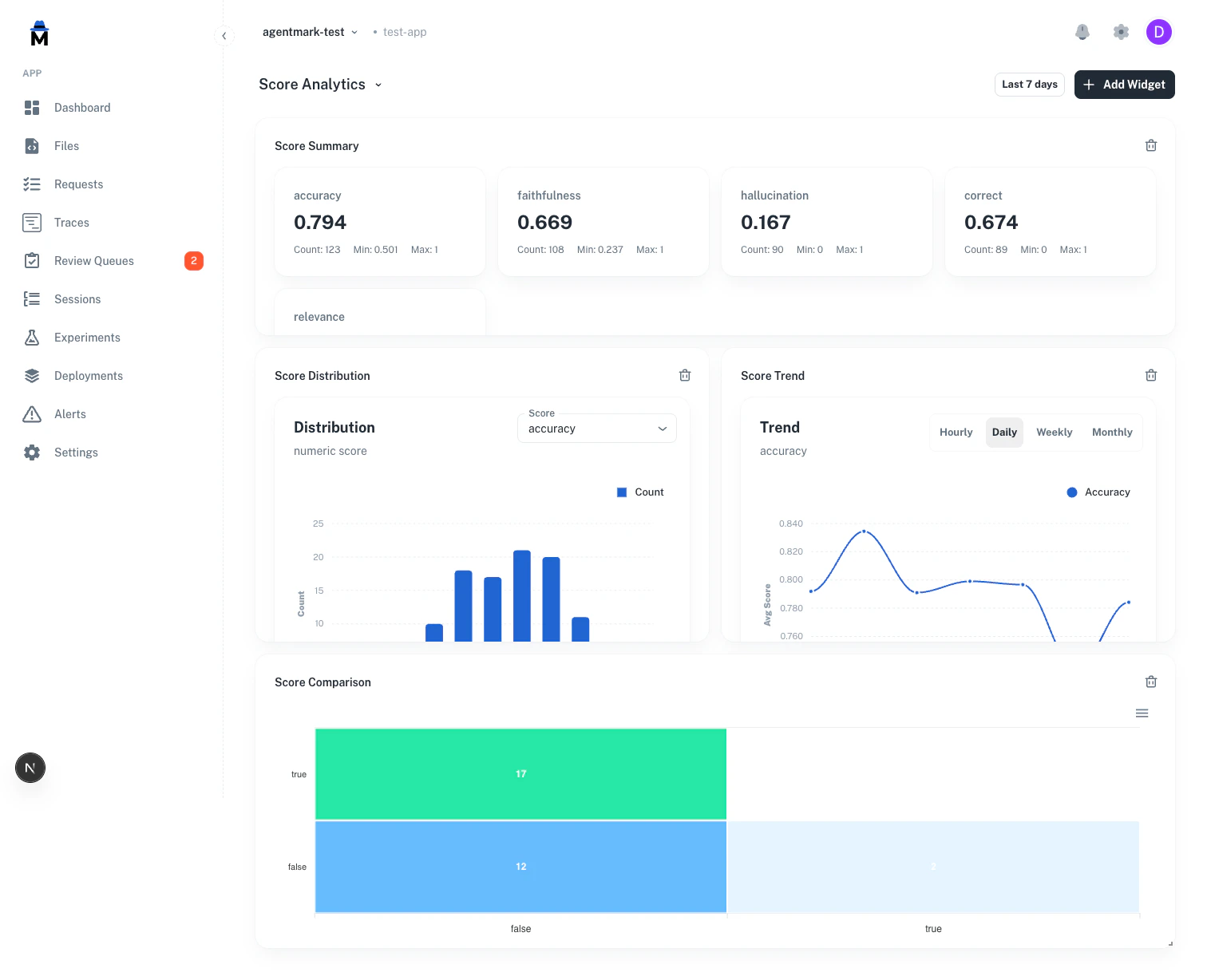

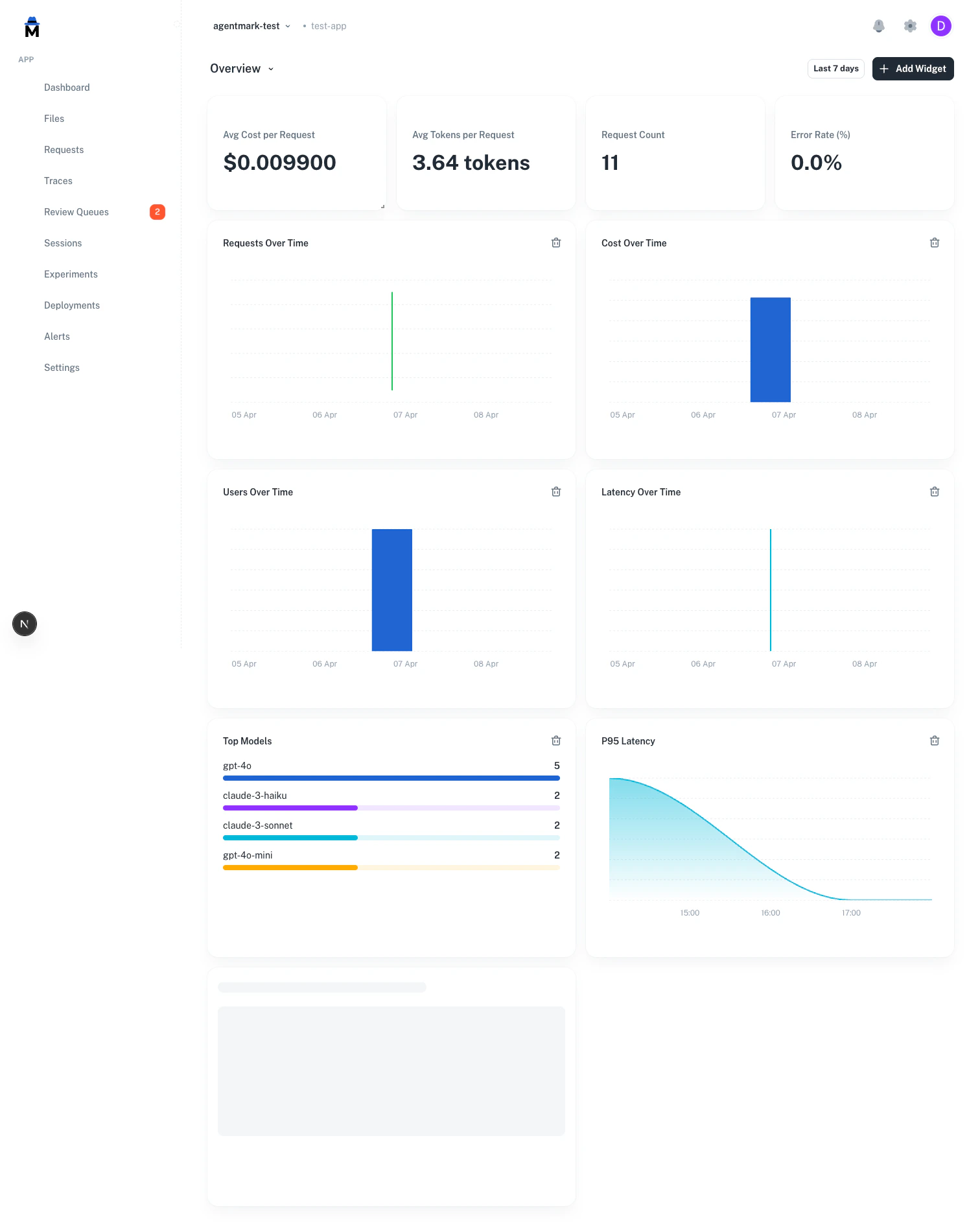

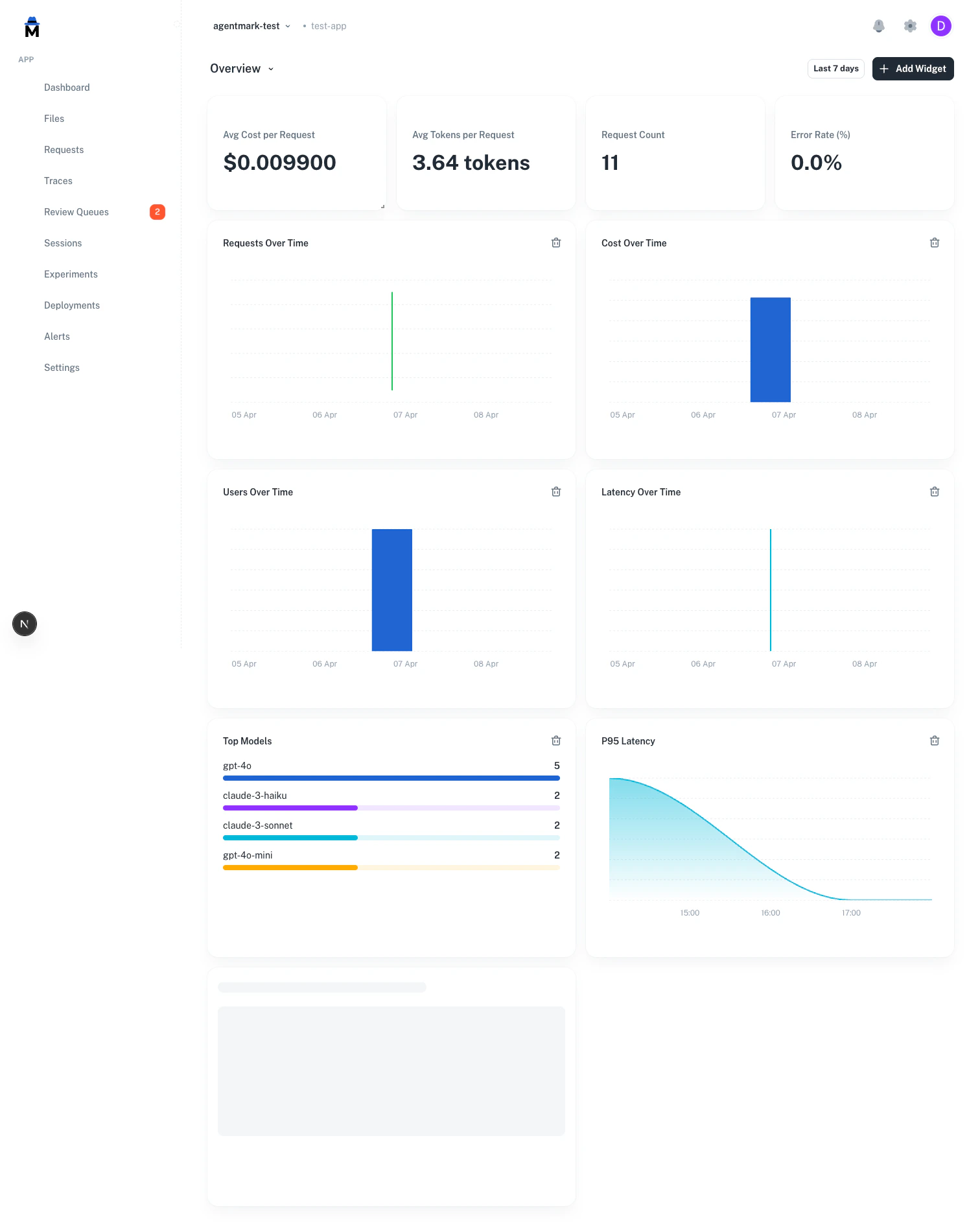

AgentMark Dashboards give you a unified view of your application’s health — operational metrics (cost, latency, tokens, errors), evaluation scores (distributions, trends, cross-score comparison), and custom widgets — all on one page.

Operational metrics

The dashboard automatically tracks key metrics from your prompt executions:

| Category | Metrics |
|---|---|
| Cost | Total cost, average cost per request, cost by model |
| Latency | Average latency, P50/P95/P99 percentiles, latency trends |
| Tokens | Input tokens, output tokens, total tokens, tokens by model |
| Volume | Request count, error count, error rate, unique users |
| Models | Request count per model, cost per model, top models ranking |
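
To make the latency percentiles in the table concrete, here is a minimal sketch of how P50/P95/P99 can be computed from raw latencies. The nearest-rank method is an assumption chosen for illustration; the source does not say which method AgentMark uses, and the function name and sample values are hypothetical.

```python
import math

def nearest_rank_percentile(values, q):
    """Return the q-th percentile (0 < q <= 100) using the nearest-rank method."""
    if not values:
        raise ValueError("values must not be empty")
    if not 0 < q <= 100:
        raise ValueError("q must be in (0, 100]")
    ordered = sorted(values)
    # 1-based rank of the smallest value with at least q% of the data at or below it
    rank = math.ceil(q * len(ordered) / 100)
    return ordered[rank - 1]

# Hypothetical request latencies in milliseconds, including one slow outlier
latencies_ms = [120, 95, 310, 150, 101, 99, 2000, 130, 140, 110]
p50 = nearest_rank_percentile(latencies_ms, 50)
p95 = nearest_rank_percentile(latencies_ms, 95)
p99 = nearest_rank_percentile(latencies_ms, 99)
```

In this sample the single 2000 ms request sets P95 and P99 while P50 stays at 120 ms, which is why percentiles are usually read alongside the average.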

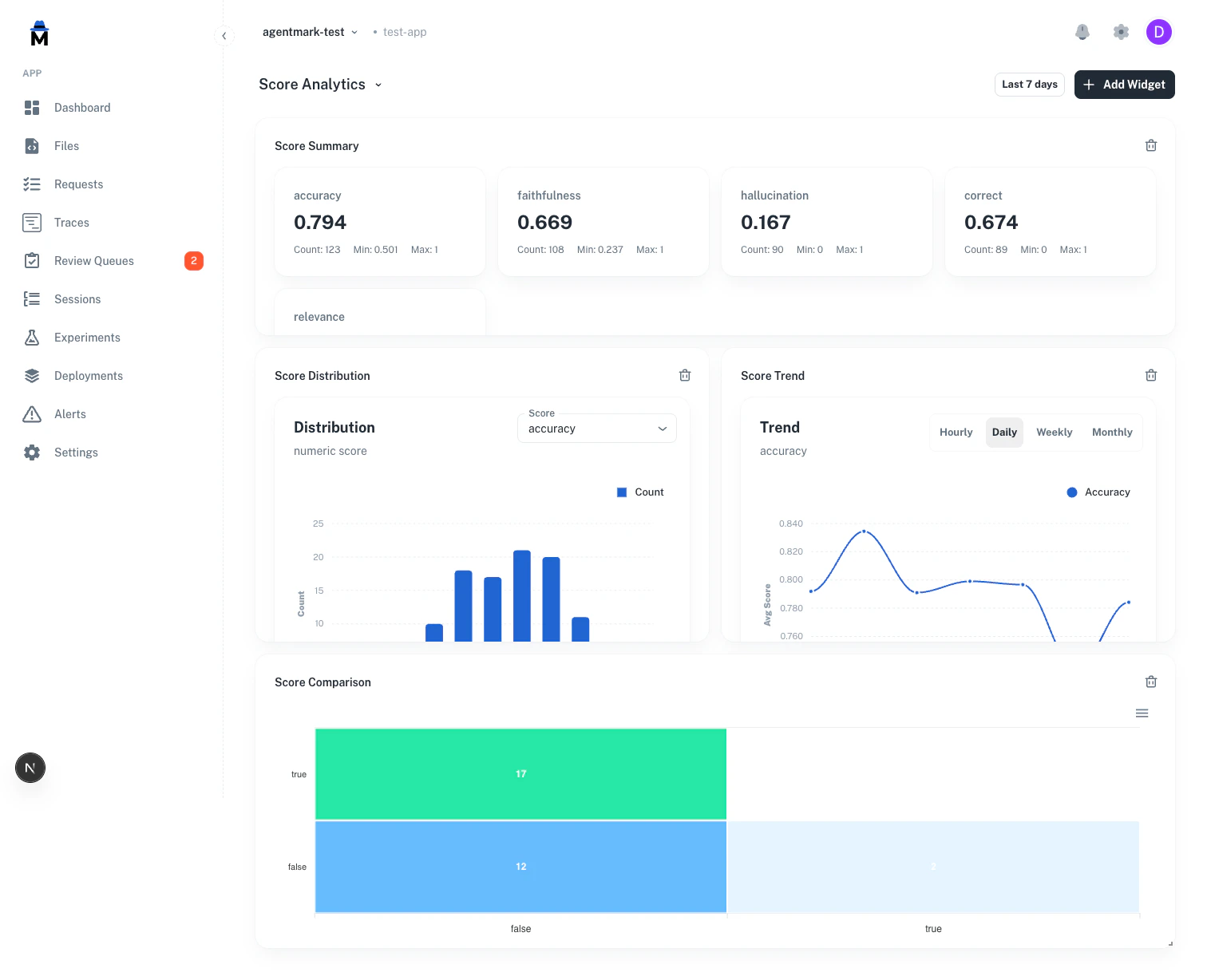

Score analytics

Score analytics are available as dashboard widgets — add them to any dashboard through the “Add Widget” dialog, or start from the Score Analytics template in the template gallery.

Four score widget types are available:

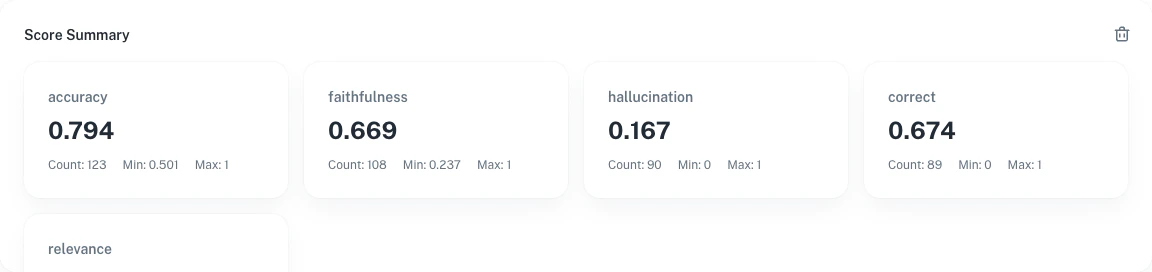

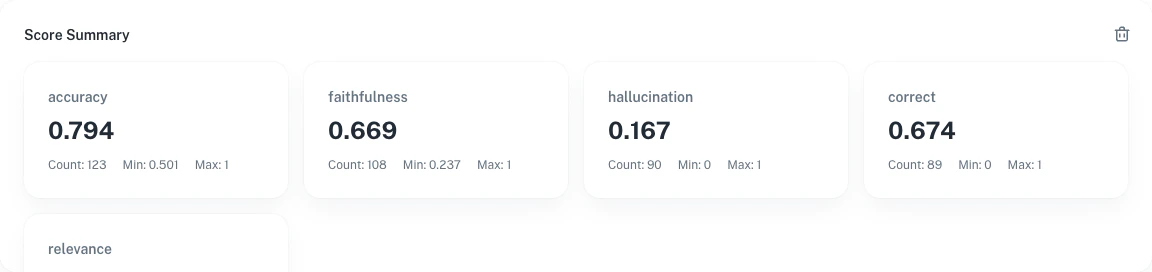

Summary cards

Aggregated statistics for each score name:

- Avg — Mean score value

- Count — Total number of scores recorded

- Min / Max — Range of observed values
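
The statistics on a summary card can be sketched as a simple aggregation per score name. The `(name, value)` record shape and the function name below are assumptions for illustration, not AgentMark's data model.

```python
from collections import defaultdict
from statistics import fmean

def summarize_scores(records):
    """Group (score_name, value) records by name and compute avg, count, min, max."""
    by_name = defaultdict(list)
    for name, value in records:
        by_name[name].append(value)
    return {
        name: {
            "avg": fmean(values),
            "count": len(values),
            "min": min(values),
            "max": max(values),
        }
        for name, values in by_name.items()
    }

# Hypothetical score records
summary = summarize_scores([
    ("accuracy", 0.5),
    ("accuracy", 1.0),
    ("accuracy", 0.0),
    ("tone", 0.25),
])
```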

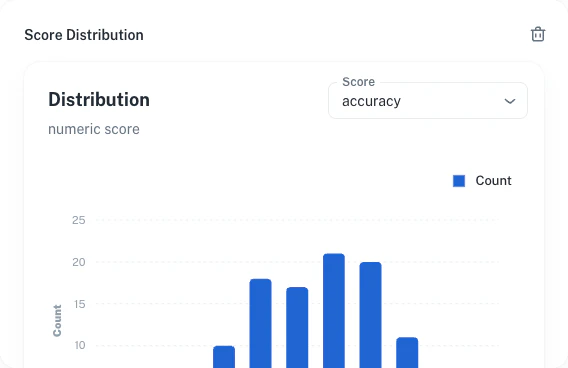

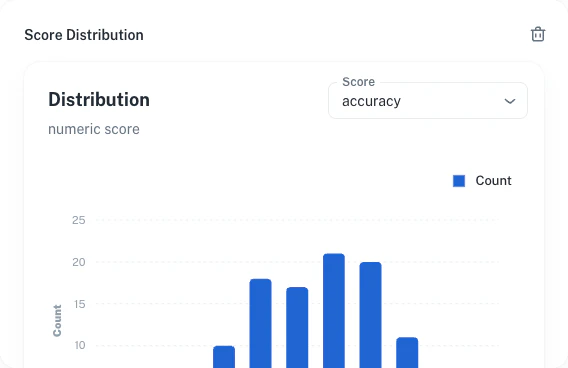

Score distribution

The histogram shows how score values are distributed. AgentMark auto-detects the score type:

- Numeric scores — 10 equal-width bins between min and max

- Categorical scores — Bar chart by category label

- Boolean scores — Two bars for true/false
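
For numeric scores, the "10 equal-width bins between min and max" rule can be sketched as follows. This is an illustration only: the handling of the maximum value (placed in the last bin) and of the case where all values are identical are assumptions, and the function name is hypothetical.

```python
def equal_width_histogram(values, bins=10):
    """Return a list of (bin_start, bin_end, count) tuples for numeric values."""
    if not values:
        return []
    lo, hi = min(values), max(values)
    if lo == hi:
        # Assumption: identical values collapse into a single bin
        return [(lo, hi, len(values))]
    width = (hi - lo) / bins
    counts = [0] * bins
    for v in values:
        # The maximum value would land at index `bins`, so clamp it into the last bin
        idx = min(int((v - lo) / width), bins - 1)
        counts[idx] += 1
    return [(lo + i * width, lo + (i + 1) * width, counts[i]) for i in range(bins)]

# Hypothetical scores 0 through 10
hist = equal_width_histogram(list(range(11)))
bin_counts = [count for _, _, count in hist]
```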

Trend over time

Average score values over configurable intervals — Hourly, Daily, Weekly, or Monthly.
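
As a rough illustration of the Daily interval, the sketch below groups score values by calendar date and averages each group. The `(timestamp, value)` input shape is an assumption, and time zones are ignored for simplicity; the source does not describe how AgentMark aligns bucket boundaries.

```python
from collections import defaultdict
from datetime import date, datetime

def daily_average(points):
    """Average (timestamp, value) points per calendar day, sorted by day."""
    buckets = defaultdict(list)
    for ts, value in points:
        buckets[ts.date()].append(value)
    return [(day, sum(vals) / len(vals)) for day, vals in sorted(buckets.items())]

# Hypothetical score observations
trend = daily_average([
    (datetime(2024, 1, 1, 9, 0), 0.5),
    (datetime(2024, 1, 1, 17, 30), 1.0),
    (datetime(2024, 1, 2, 8, 0), 0.25),
])
```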

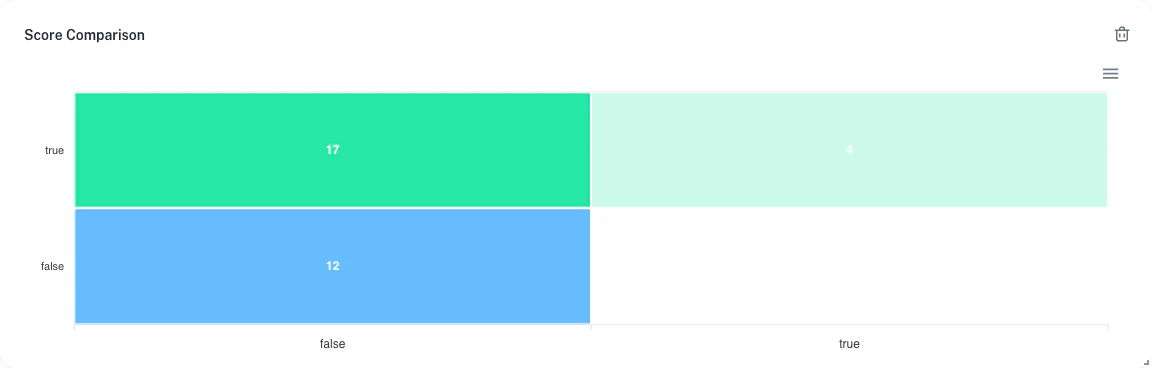

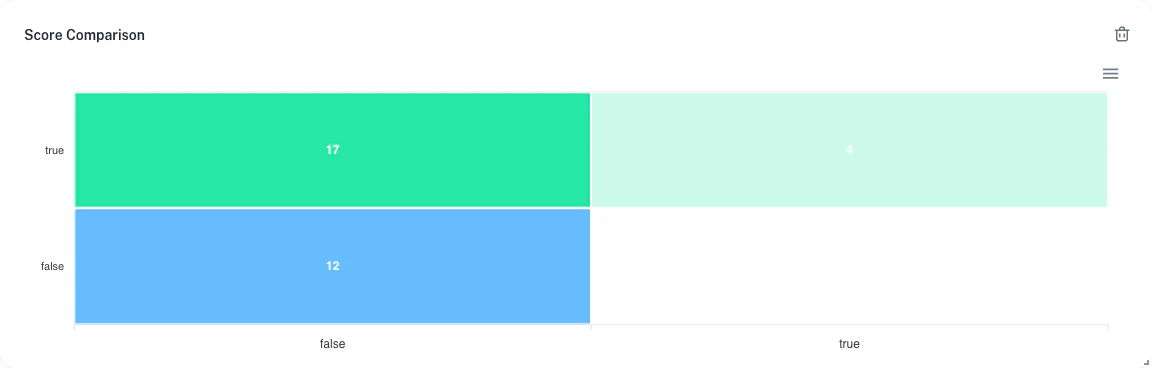

Score comparison

Compare two scores of the same type to see how they align across shared traces:

- Categorical / Boolean — Confusion matrix (N×M heatmap)

- Numeric — Scatter plot with paired values

Both scores must be the same type; comparing a numeric score with a categorical one produces an error. For performance, the scatter plot is capped at 10,000 data points.
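
The idea of comparing two categorical or boolean scores across shared traces can be sketched as a join on trace ID followed by a count of label pairs. The dictionaries keyed by trace ID and both function names are assumptions for illustration.

```python
from collections import Counter

def pair_by_trace(scores_a, scores_b):
    """Return (label_a, label_b) pairs for traces that have both scores."""
    shared = scores_a.keys() & scores_b.keys()
    return [(scores_a[t], scores_b[t]) for t in sorted(shared)]

def confusion_matrix(pairs):
    """Count label pairs into an N x M matrix; rows are labels of A, columns labels of B."""
    counts = Counter(pairs)
    rows = sorted({a for a, _ in counts})
    cols = sorted({b for _, b in counts})
    matrix = [[counts[(a, b)] for b in cols] for a in rows]
    return rows, cols, matrix

# Hypothetical labels keyed by trace ID; t4 and t5 are not shared and are dropped
score_a = {"t1": "true", "t2": "true", "t3": "false", "t4": "true"}
score_b = {"t1": "true", "t2": "false", "t3": "false", "t5": "true"}
rows, cols, matrix = confusion_matrix(pair_by_trace(score_a, score_b))
```

Cells on the diagonal count traces where the two scores agree; off-diagonal cells show where they disagree.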

Score types

| Score type | Detection rule | Distribution | Comparison |
|---|---|---|---|
| Numeric | Float values, no labels | 10-bin histogram | Scatter plot |
| Categorical | String labels (not just true/false) | Category bar chart | N×M confusion matrix |
| Boolean | Labels are only “true” and/or “false” | Two-bar chart | 2×2 confusion matrix |
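
The detection rules in the table can be expressed as a short function. This is a sketch under assumptions: scores are represented only by their optional label (`None` when a score has no label), matching is case-sensitive, and the function name is hypothetical.

```python
def detect_score_type(labels):
    """Classify a score from its labels, following the detection rules above."""
    present = {label for label in labels if label is not None}
    if not present:
        return "numeric"       # values only, no labels
    if present <= {"true", "false"}:
        return "boolean"       # labels are only "true" and/or "false"
    return "categorical"       # any other string labels
```

For example, `detect_score_type(["true", "true"])` returns `"boolean"` even though only one of the two labels is present.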

Widgets

Dashboards are fully configurable with drag-and-drop widgets. Add any mix of operational and score widgets to create the view you need.

Operational widgets (stat card, line, bar, or area chart):

- Request count, error rate, cost, latency, tokens, unique users, model rankings

- Derived metrics: cost/request, tokens/request, success rate, and more

Score widgets:

- Score Summary — aggregated stats for all scores

- Score Distribution — histogram or category chart for a selected score

- Score Trend — trend line over time for a selected score

- Score Comparison — confusion matrix or scatter plot comparing two scores

Available metrics

Volume: request_count, unique_users, total_tokens, avg_tokens

Cost: total_cost, avg_cost

Errors: error_count, error_rate

Latency: avg_latency, p50_latency, p95_latency, p99_latency

Rankings: top_models
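
Derived metrics such as cost/request, tokens/request, and success rate can be understood as ratios of the built-in metrics listed above. The formulas below are assumptions for illustration; the source does not define how AgentMark computes them, and the function name is hypothetical.

```python
def derived_metrics(m):
    """Compute illustrative derived metrics from built-in metric totals."""
    requests = m["request_count"]
    if requests == 0:
        # Assumption: ratios are undefined when there are no requests
        return {"cost_per_request": None, "tokens_per_request": None, "success_rate": None}
    return {
        "cost_per_request": m["total_cost"] / requests,
        "tokens_per_request": m["total_tokens"] / requests,
        "success_rate": (requests - m["error_count"]) / requests,
    }

# Hypothetical totals for one time range
derived = derived_metrics({
    "request_count": 200,
    "total_cost": 5.0,
    "total_tokens": 40000,
    "error_count": 10,
})
```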

Adding widgets

- Click + Add Widget in the dashboard header

- Choose a title and metric — operational metrics are under “Built-in” and “Derived”, score metrics are under “Scores”

- For score widgets, enter the score name(s) to track

- Choose a visualization type and optional group-by dimension

- The widget appears on the grid — drag to rearrange

Operational widgets support group-by dimensions (model, user, metadata key), time granularity (hour, day, auto), and filters (model, user ID, status).

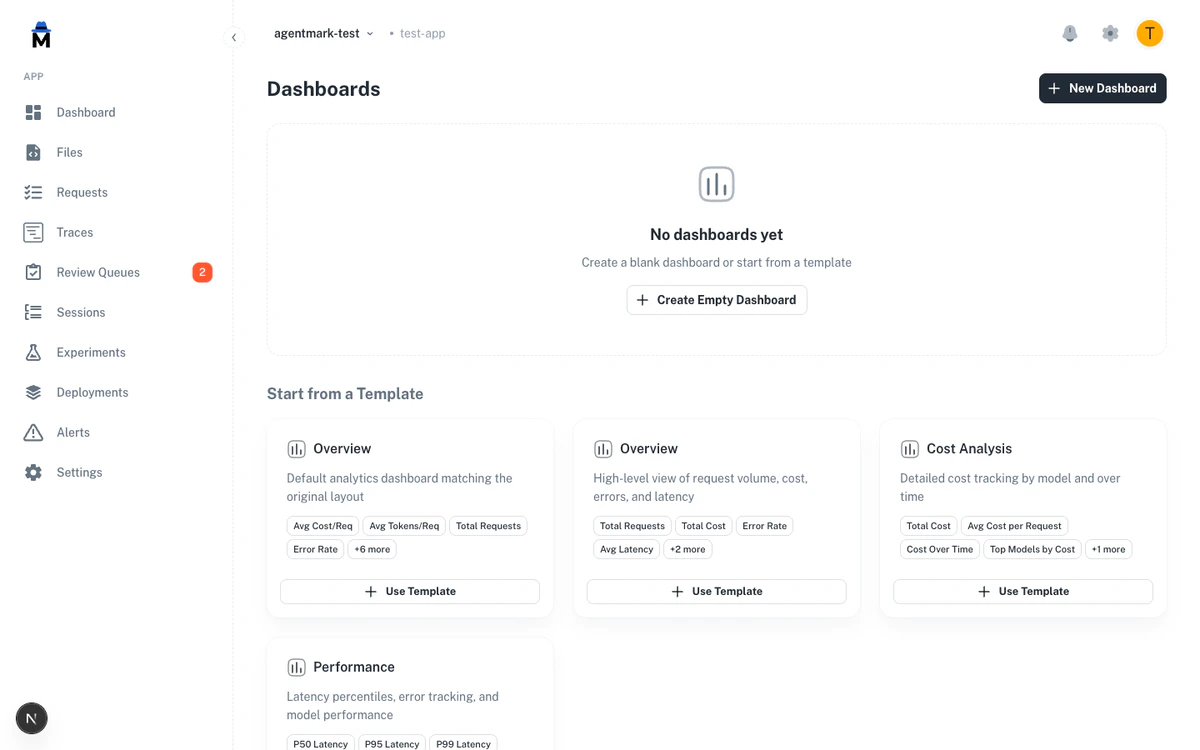

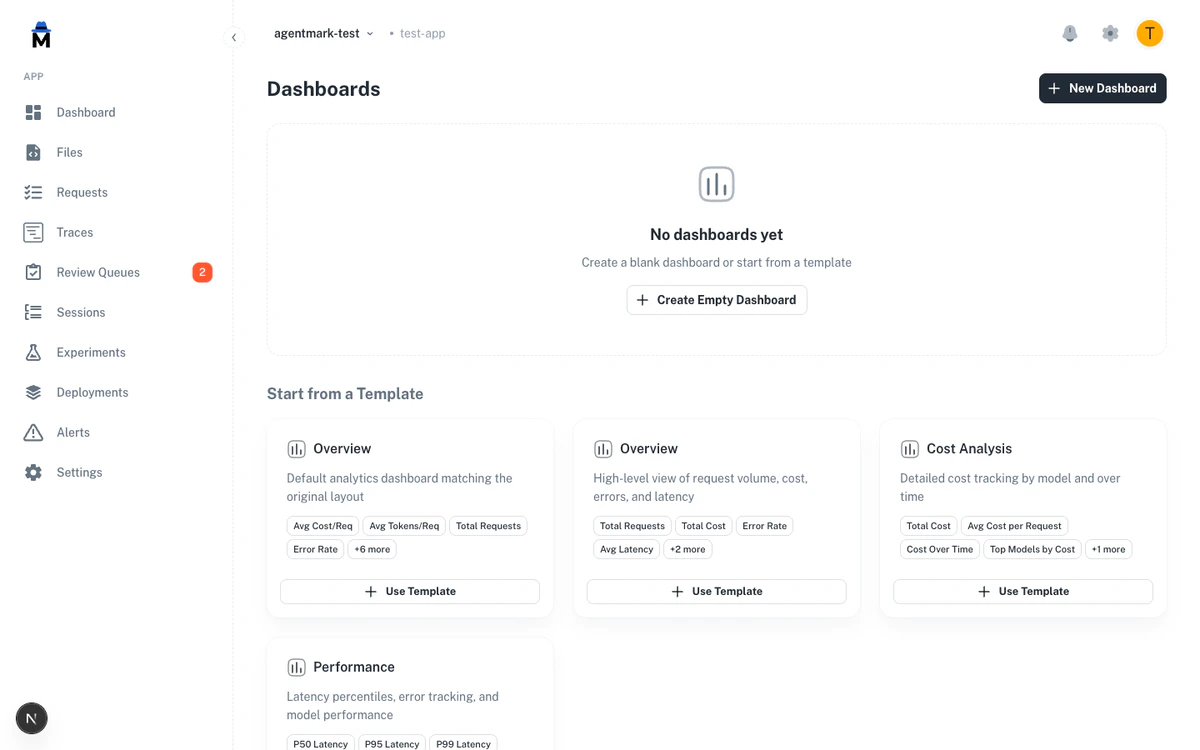

Templates

Start from a pre-built template or create a blank dashboard.

| Template | What it includes |
|---|---|
| Overview | Request volume, cost, errors, latency (stat cards + time series) |
| Cost Analysis | Total cost, avg cost/request, cost over time, top models by cost, tokens |
| Performance | P50/P95/P99 latency, error count, error rate |
| Score Analytics | Score summary, distribution, trend, and comparison widgets |

Dashboard settings

- Default dashboard — mark any dashboard as default to load it when you visit the Dashboard page

- Time range — global selector (24h, 7d, 30d, 90d) applies to all widgets and the score analytics section

- Limits — up to 10 dashboards per app, maximum 20 widgets per dashboard

Score analytics API

Score data is available via four API endpoints. All require authentication and an appId parameter.

GET /api/analytics/scores/histogram

Returns bucketed distribution data for a score.

| Parameter | Required | Description |
|---|---|---|
| appId | Yes | Application ID |
| name | Yes | Score name |
| range | No | Date range preset (default: 7d) |
| startDate | If custom | Start date for custom range |
| endDate | If custom | End date for custom range |
| source | No | Filter by source (eval or annotation) |
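
A request URL for the histogram endpoint can be assembled from the parameters in the table. The sketch below only builds the URL; the base URL, the literal value `custom` for `range`, and the date format are assumptions, and the authentication mechanism is not shown because the source does not describe it.

```python
from urllib.parse import urlencode

def histogram_url(base_url, app_id, name, range_="7d",
                  start_date=None, end_date=None, source=None):
    """Build a GET URL for /api/analytics/scores/histogram."""
    params = {"appId": app_id, "name": name, "range": range_}
    if range_ == "custom":  # assumed literal value for a custom range
        if not (start_date and end_date):
            raise ValueError("startDate and endDate are required for a custom range")
        params["startDate"] = start_date
        params["endDate"] = end_date
    if source is not None:
        if source not in ("eval", "annotation"):
            raise ValueError("source must be 'eval' or 'annotation'")
        params["source"] = source
    return f"{base_url.rstrip('/')}/api/analytics/scores/histogram?{urlencode(params)}"

# Hypothetical base URL, app ID, and score name
url = histogram_url("https://example.invalid", "app_123", "accuracy", source="eval")
```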

GET /api/analytics/scores/trend

Returns time-series trend data for a score.

| Parameter | Required | Description |
|---|---|---|
| appId | Yes | Application ID |
| name | Yes | Score name |
| interval | No | hour, day, week, month (default: day) |
| range | No | Date range preset (default: 7d) |
| startDate | If custom | Start date for custom range |
| endDate | If custom | End date for custom range |
| source | No | Filter by source (eval or annotation) |
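
Because `interval` accepts only four values, a client may want to validate it before sending a request. This is a minimal sketch with a hypothetical helper name; it builds the query string only and does not call the API.

```python
from urllib.parse import urlencode

ALLOWED_INTERVALS = ("hour", "day", "week", "month")

def trend_query(app_id, name, interval="day", range_="7d"):
    """Build the query string for /api/analytics/scores/trend."""
    if interval not in ALLOWED_INTERVALS:
        raise ValueError(f"interval must be one of {ALLOWED_INTERVALS}")
    return urlencode({
        "appId": app_id,
        "name": name,
        "interval": interval,
        "range": range_,
    })

# Hypothetical app ID and score name
query = trend_query("app_123", "accuracy", interval="week")
```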

GET /api/analytics/scores/comparison

Returns confusion matrix data comparing two categorical/boolean scores.

| Parameter | Required | Description |
|---|---|---|
| appId | Yes | Application ID |
| nameA | Yes | First score name |
| nameB | Yes | Second score name |
| range | No | Date range preset (default: 7d) |
| startDate | If custom | Start date for custom range |
| endDate | If custom | End date for custom range |
| source | No | Filter by source (eval or annotation) |

GET /api/analytics/scores/scatter

Returns paired numeric score values for a scatter plot. Both scores must be numeric.

| Parameter | Required | Description |
|---|---|---|
| appId | Yes | Application ID |
| nameA | Yes | First score name (numeric) |
| nameB | Yes | Second score name (numeric) |
| range | No | Date range preset (default: 7d) |
| startDate | If custom | Start date for custom range |
| endDate | If custom | End date for custom range |
| source | No | Filter by source (eval or annotation) |

The scatter endpoint returns a maximum of 10,000 paired data points. A “Sampled” indicator appears in the UI when the cap is reached.
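
Since the comparison and scatter endpoints serve different score types, a client needs to pick one based on the types of the two scores. The sketch below encodes that choice; the type strings, function names, and the check for whether a result has hit the cap are assumptions for illustration.

```python
SCATTER_CAP = 10_000  # maximum paired data points returned by the scatter endpoint

def comparison_endpoint(type_a, type_b):
    """Pick the API path for comparing two scores of the same type."""
    if type_a != type_b:
        raise ValueError("both scores must be the same type")
    if type_a == "numeric":
        return "/api/analytics/scores/scatter"
    if type_a in ("categorical", "boolean"):
        return "/api/analytics/scores/comparison"
    raise ValueError(f"unknown score type: {type_a}")

def may_be_sampled(point_count):
    """Heuristic (assumption): a result at the cap is probably a sample of the full data."""
    return point_count >= SCATTER_CAP
```

For example, two numeric scores map to the scatter path, while a numeric score paired with a categorical one raises an error before any request is made.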
